# SDK and API Compatibility Guide

Source: https://docs.honcho.dev/changelog/compatibility-guide

Compatibility guide for Honcho's SDKs and API

This guide helps you understand which versions of Honcho's API are compatible with which SDK versions.

## Version Compatibility

### Honcho API v2.4.2 (Current)

**Compatible TypeScript SDK Version:** v1.5.0

Install with:

```bash theme={null}

npm install @honcho-ai/sdk@1.5.0

```

**Compatible Python SDK Version:** v1.5.0

Install with:

```bash theme={null}

pip install honcho-ai==1.5.0

```

## Version Compatibility Table

| Honcho API Version | TypeScript SDK | Python SDK |

| ------------------ | -------------- | ---------- |

| v2.4.2 (Current) | v1.5.0 | v1.5.0 |

| v2.4.1 | v1.5.0 | v1.5.0 |

| v2.4.0 | v1.5.0 | v1.5.0 |

| v2.3.3 | v1.4.1 | v1.4.1 |

| v2.3.2 | v1.4.0 | v1.4.0 |

| v2.3.1 | v1.4.0 | v1.4.0 |

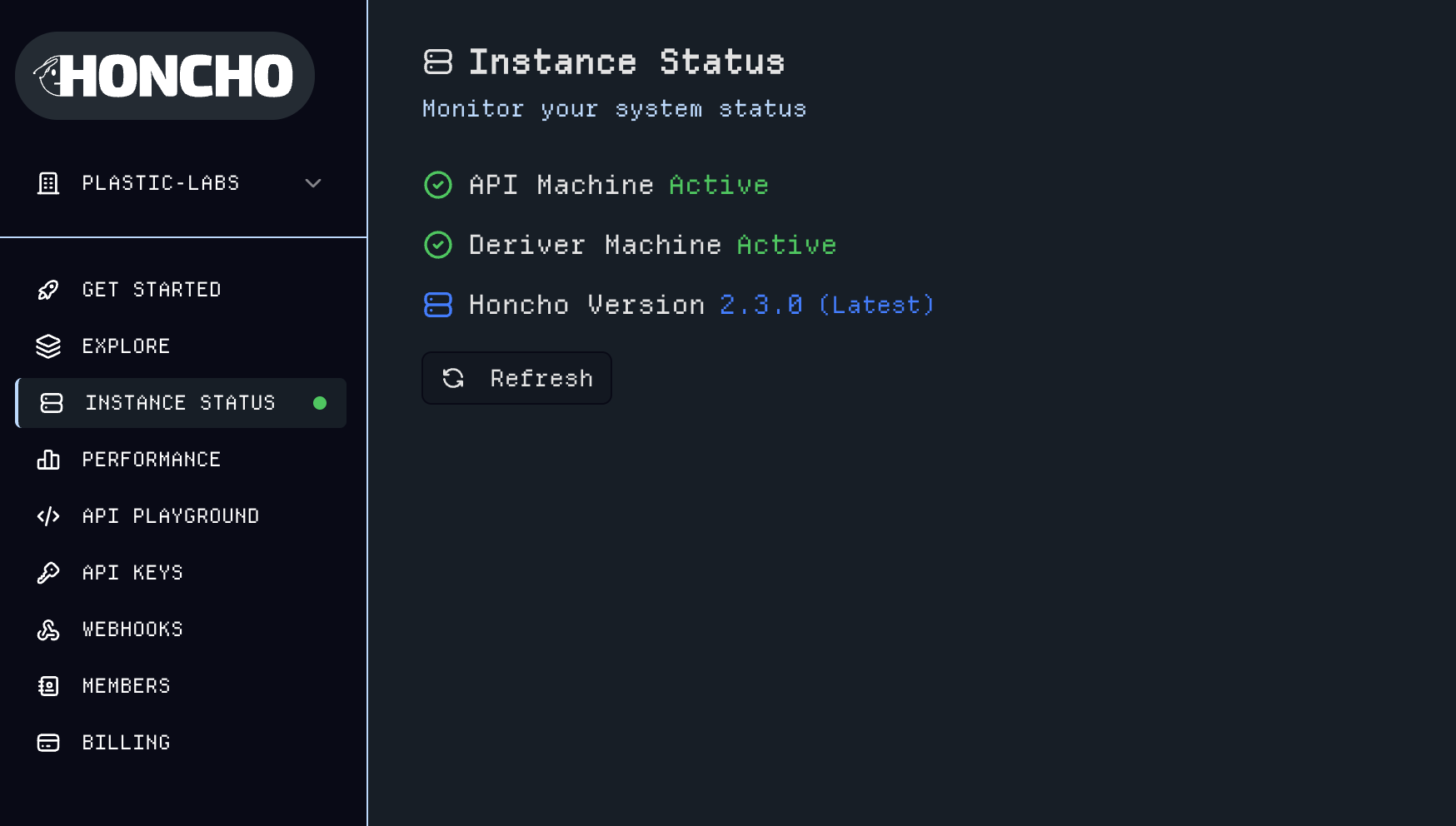

| v2.3.0 | v1.4.0 | v1.4.0 |

| v2.2.0 | v1.3.0 | v1.3.0 |

| v2.1.1 | v1.2.1 | v1.2.2 |

| v2.1.0 | v1.2.1 | v1.2.2 |

| v2.0.5 | v1.1.0 | v1.1.0 |

| v2.0.4 | v1.1.0 | v1.1.0 |
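
If you pin SDK versions in tooling, the table can be transcribed into a small lookup. The following is a minimal sketch, not part of any Honcho SDK; the names `COMPATIBILITY` and `compatible_sdk` are hypothetical and the data is copied from the table above.

```python
# Hypothetical helper: the mapping is transcribed from the compatibility table.
COMPATIBILITY = {
    "2.4.2": {"typescript": "1.5.0", "python": "1.5.0"},
    "2.4.1": {"typescript": "1.5.0", "python": "1.5.0"},
    "2.4.0": {"typescript": "1.5.0", "python": "1.5.0"},
    "2.3.3": {"typescript": "1.4.1", "python": "1.4.1"},
    "2.3.2": {"typescript": "1.4.0", "python": "1.4.0"},
    "2.3.1": {"typescript": "1.4.0", "python": "1.4.0"},
    "2.3.0": {"typescript": "1.4.0", "python": "1.4.0"},
    "2.2.0": {"typescript": "1.3.0", "python": "1.3.0"},
    "2.1.1": {"typescript": "1.2.1", "python": "1.2.2"},
    "2.1.0": {"typescript": "1.2.1", "python": "1.2.2"},
    "2.0.5": {"typescript": "1.1.0", "python": "1.1.0"},
    "2.0.4": {"typescript": "1.1.0", "python": "1.1.0"},
}


def compatible_sdk(api_version: str, language: str) -> str:
    """Return the SDK version listed for an API version ("v" prefix optional)."""
    key = api_version[1:] if api_version.startswith("v") else api_version
    try:
        return COMPATIBILITY[key][language]
    except KeyError:
        raise ValueError(
            f"no entry for API {api_version!r} and SDK {language!r}"
        ) from None


print(compatible_sdk("v2.1.0", "python"))      # 1.2.2
print(compatible_sdk("v2.3.3", "typescript"))  # 1.4.1
```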

# Changelog

Source: https://docs.honcho.dev/changelog/introduction

Welcome to the Honcho changelog! This section documents all notable changes to the Honcho API and SDKs.

Each release is documented with:

* **Added**: New features and capabilities

* **Changed**: Modifications to existing functionality

* **Deprecated**: Features that will be removed in future versions

* **Removed**: Features that have been removed

* **Fixed**: Bug fixes and corrections

* **Security**: Security-related improvements

## Version Format

Honcho follows [Semantic Versioning](https://semver.org/):

* **MAJOR** version for incompatible API changes

* **MINOR** version for backwards-compatible functionality additions

* **PATCH** version for backwards-compatible bug fixes
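
As an illustration of the scheme above, this sketch classifies the difference between two version strings. The function names are hypothetical, and it only handles plain `MAJOR.MINOR.PATCH` strings (no pre-release or build suffixes).

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, int, int]:
    """Split "v2.4.2" or "2.4.2" into (major, minor, patch) integers."""
    major, minor, patch = version.lstrip("v").split(".")
    return int(major), int(minor), int(patch)


def change_kind(old: str, new: str) -> str:
    """Name the most significant component that differs between two versions."""
    old_parts, new_parts = parse_version(old), parse_version(new)
    for label, before, after in zip(("major", "minor", "patch"), old_parts, new_parts):
        if before != after:
            return label
    return "none"


print(change_kind("v2.3.3", "v2.4.0"))  # minor
print(change_kind("v2.4.1", "v2.4.2"))  # patch
```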

### Honcho API and SDK Changelogs

### Fixed

* Langfuse tracing to have readable waterfalls

* Alembic Migrations to match models.py

* message\_in\_seq correctly included in webhook payload

### Changed

* Alembic to always use a session pooler

* Statement timeout during alembic operations to 5 min

### Added

* Alembic migration validation test suite

### Fixed

* Alembic migrations to batch changes

* Batch message creation sequence number

### Changed

* Logging infrastructure to remove noisy messages

* Sentry integration is centralized

### Added

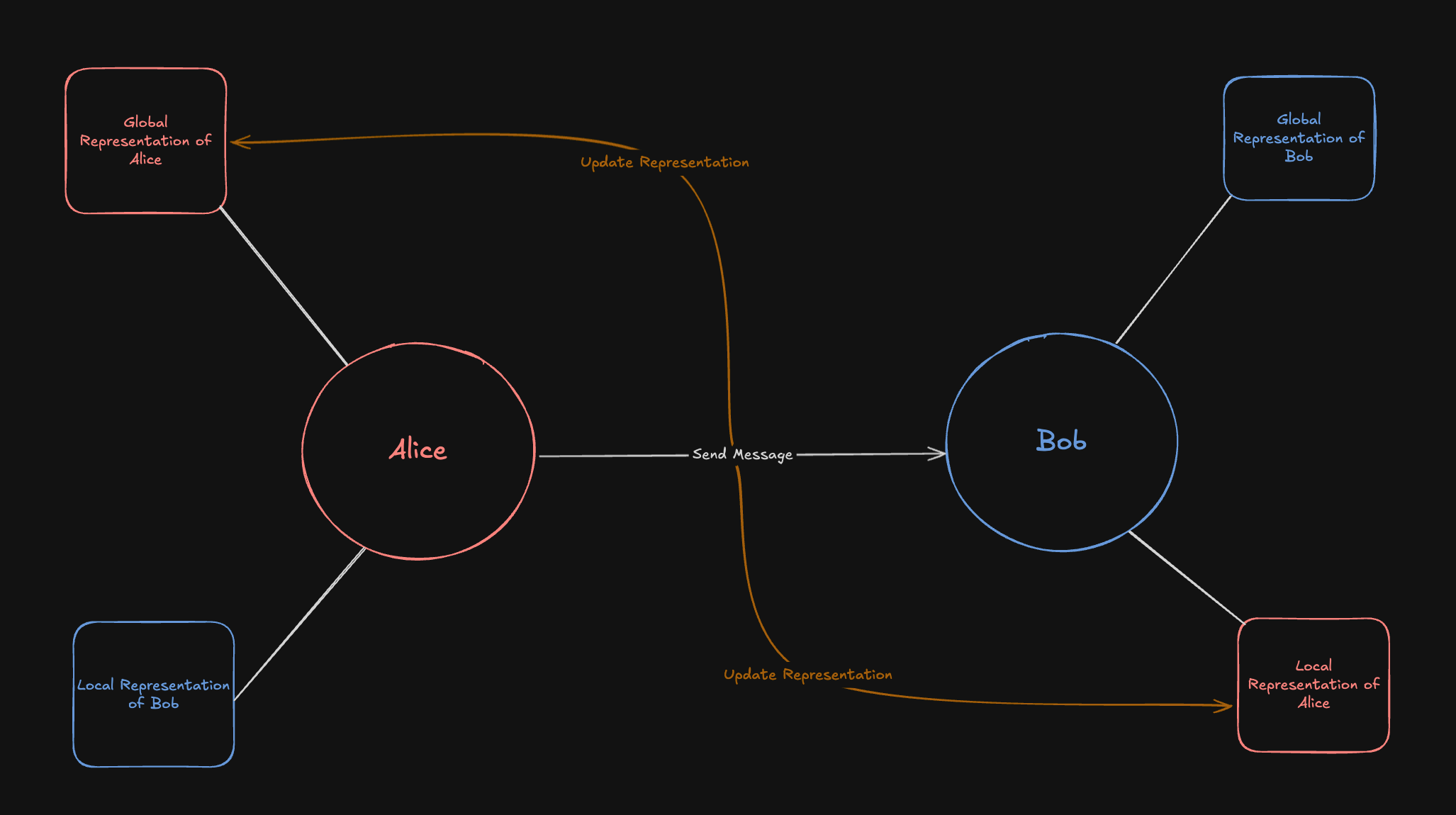

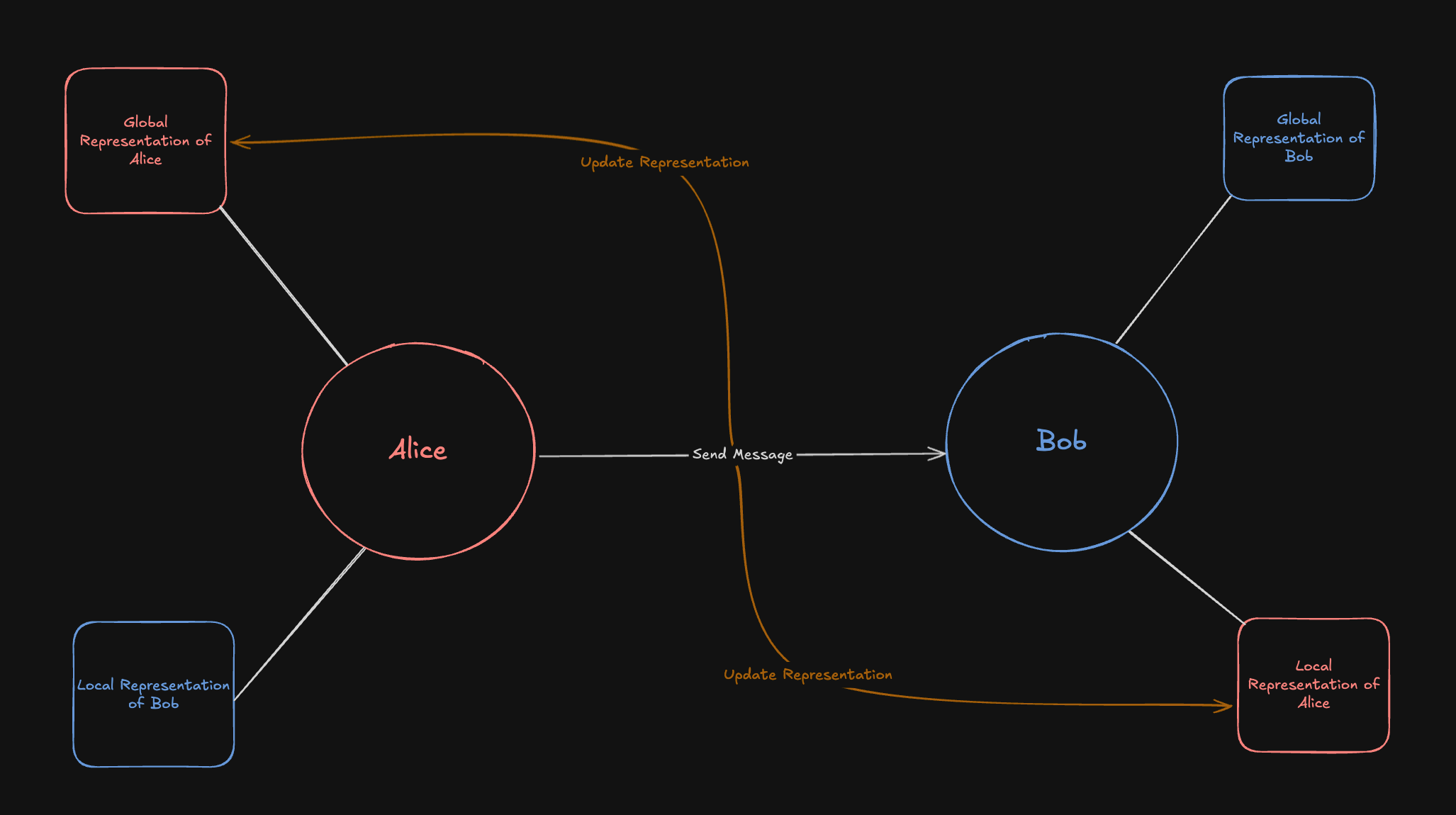

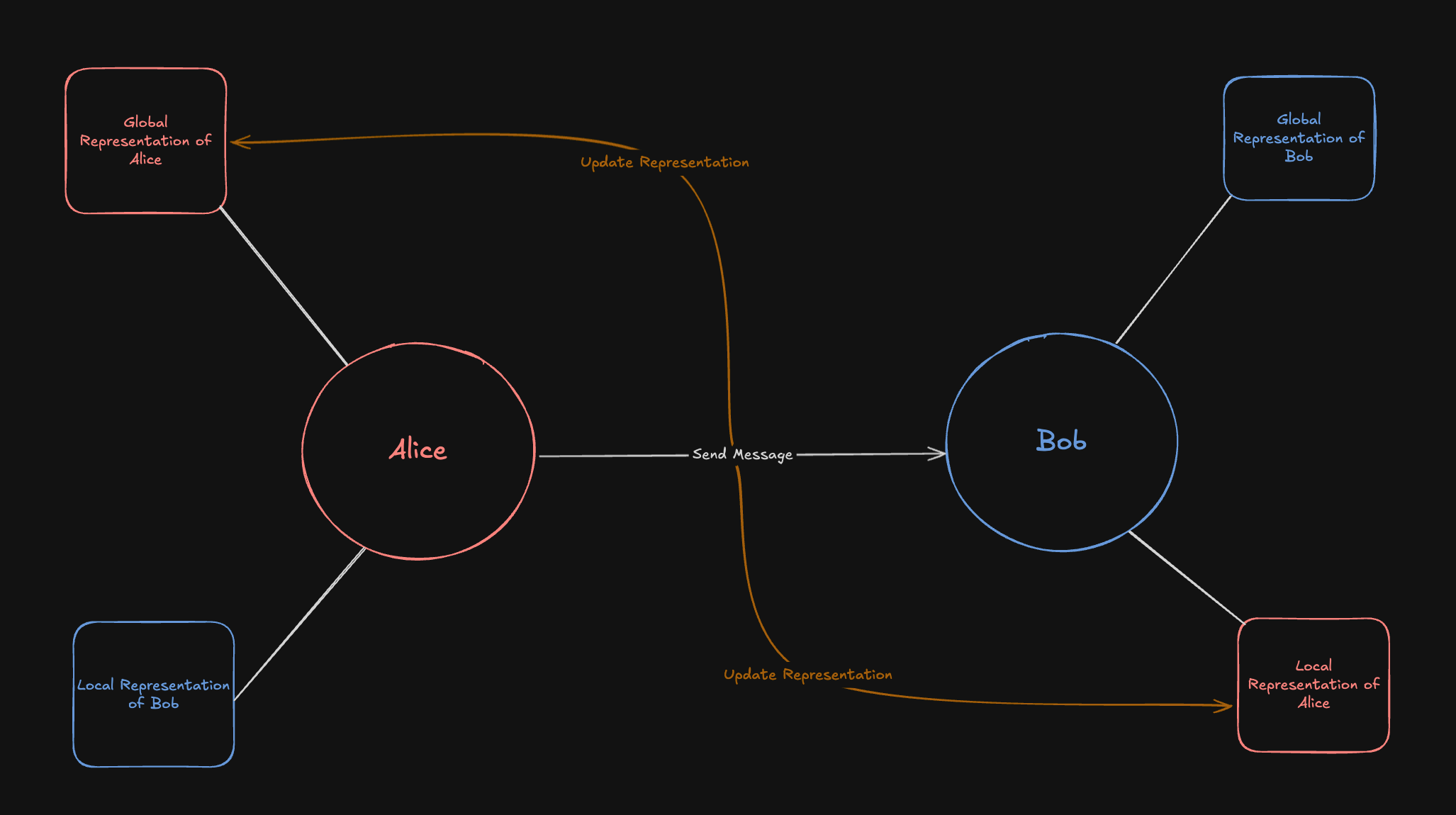

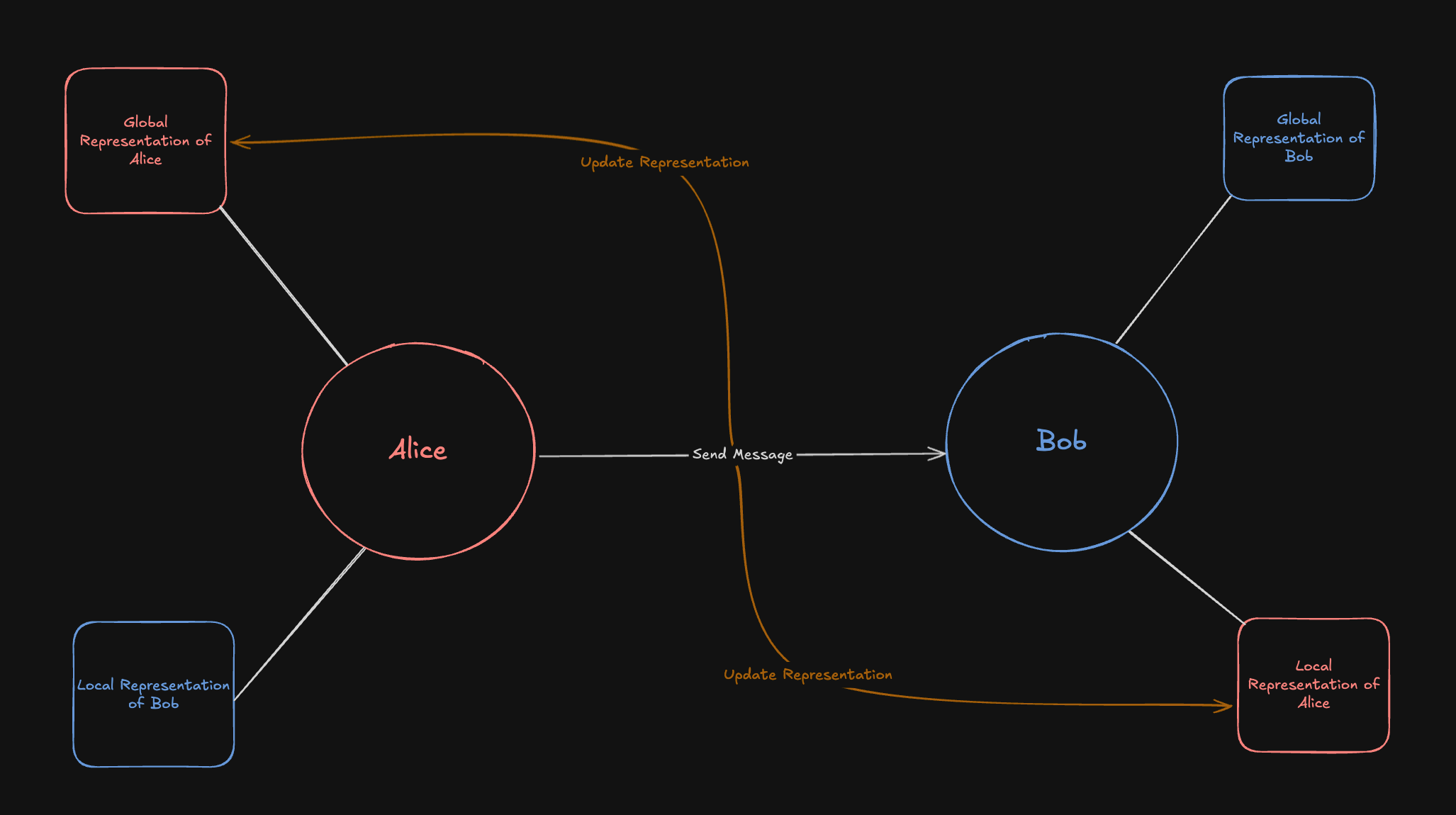

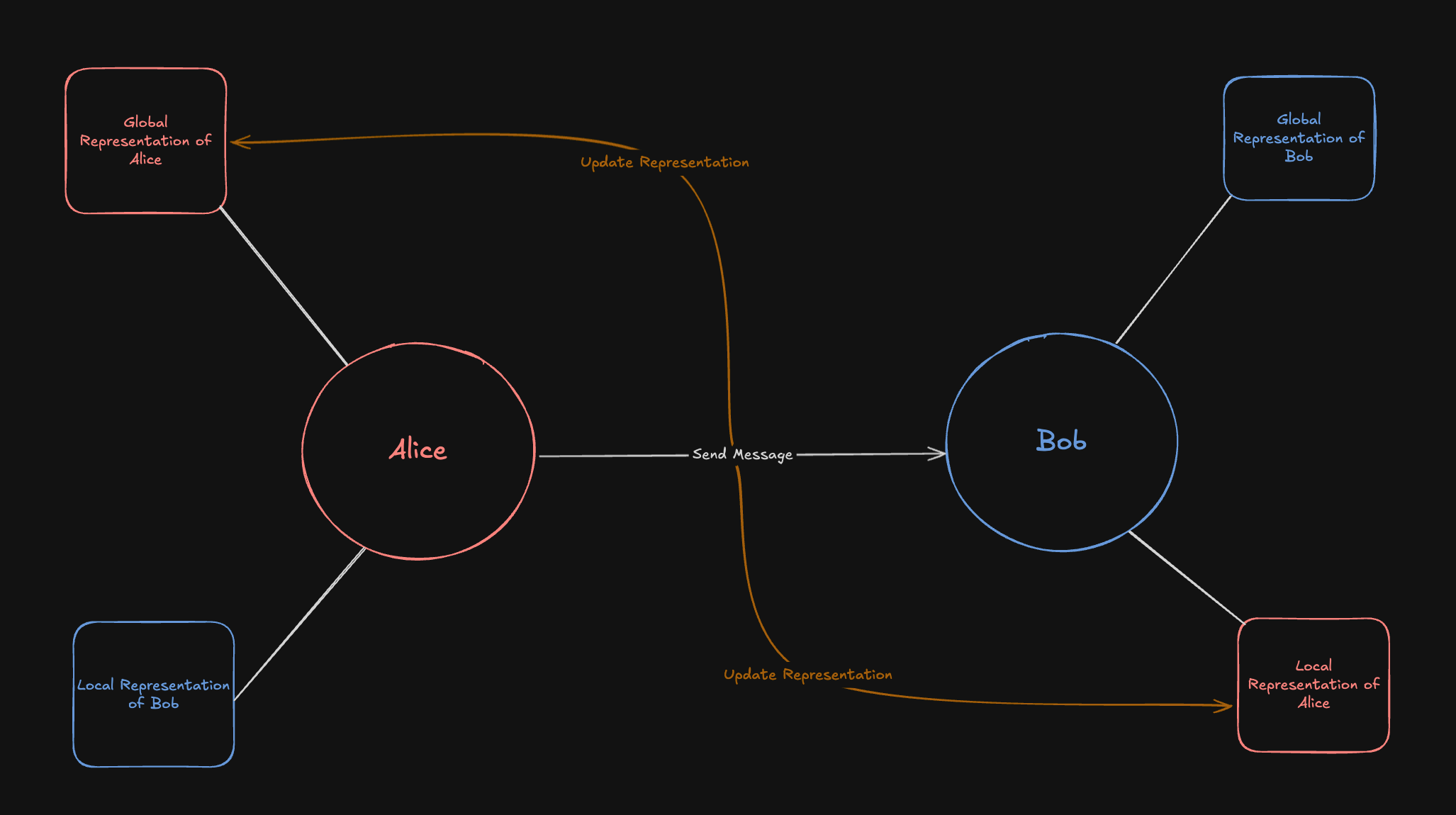

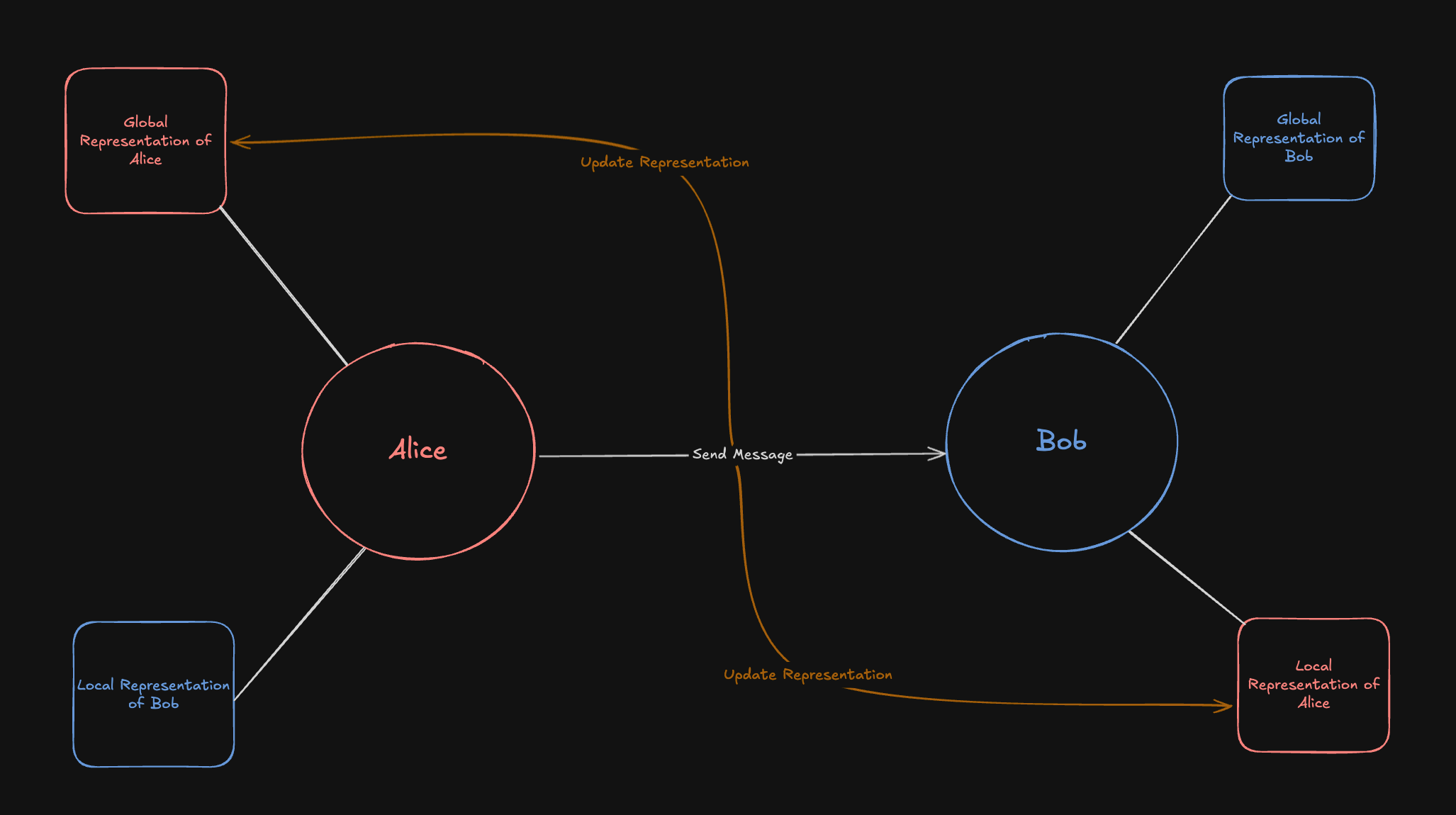

* Unified `Representation` class

* vllm client support

* Periodic queue cleanup logic

* WIP Dreaming Feature

* LongMemEval to Test Bench

* Prometheus Client for better Metrics

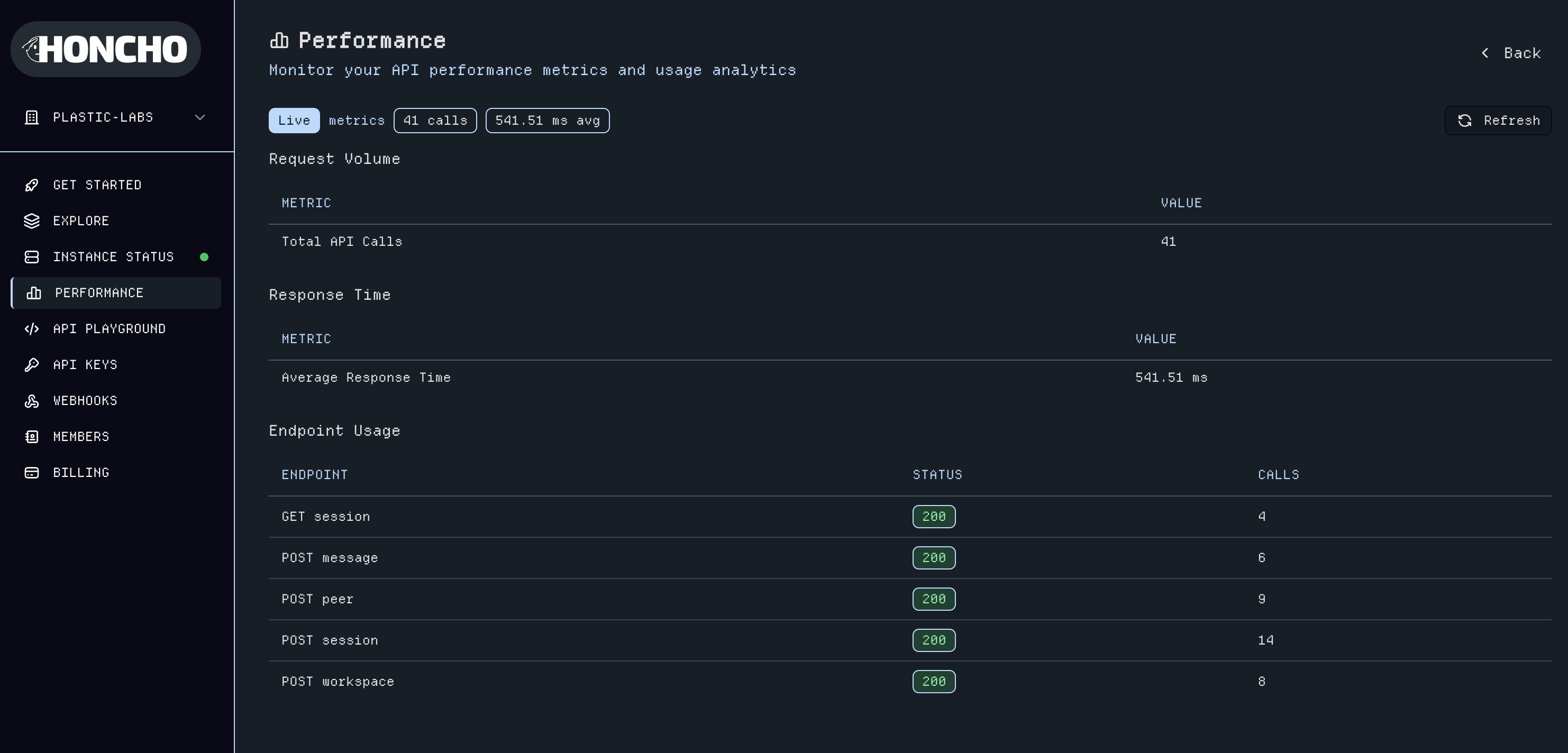

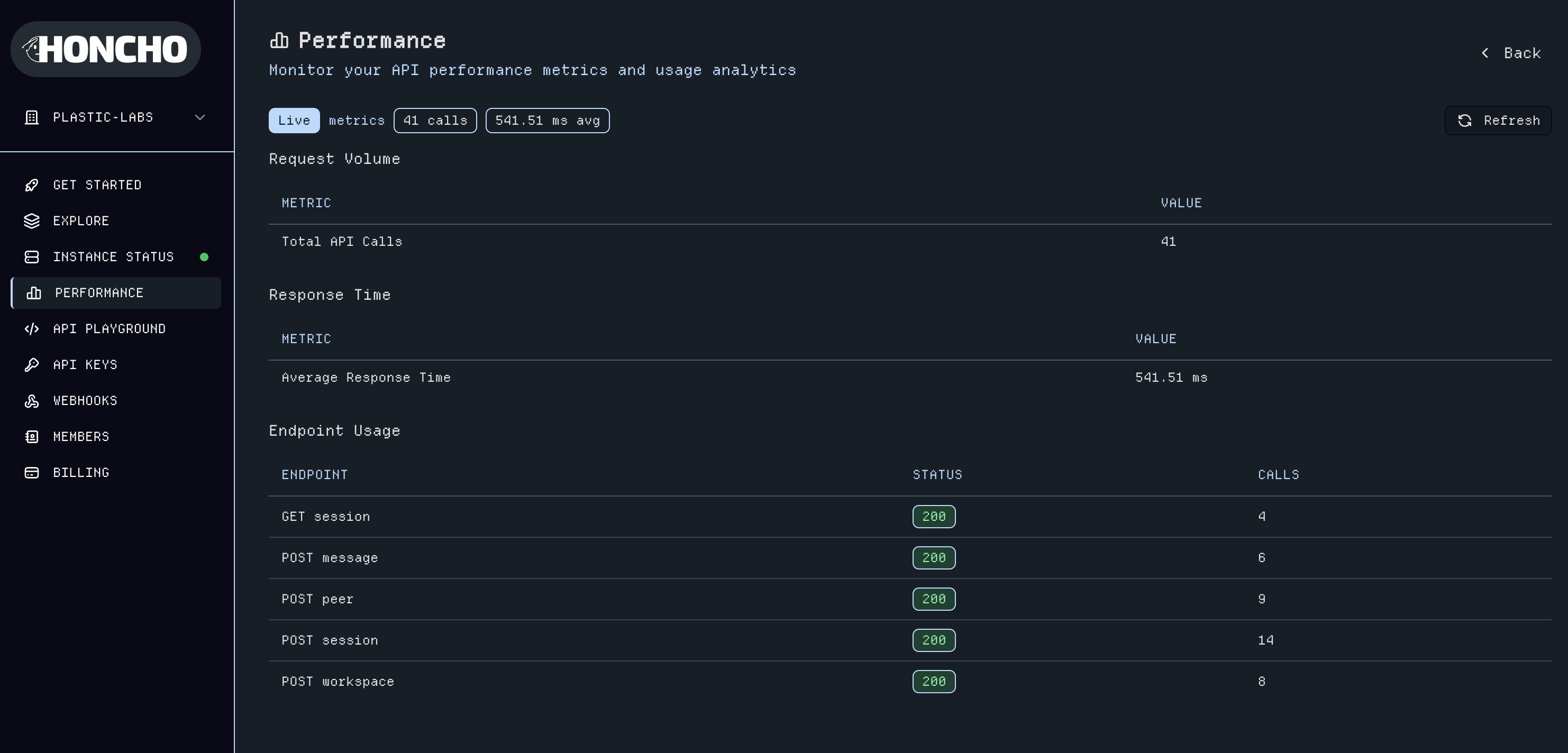

* Performance metrics instrumentation

* Error reporting to deriver

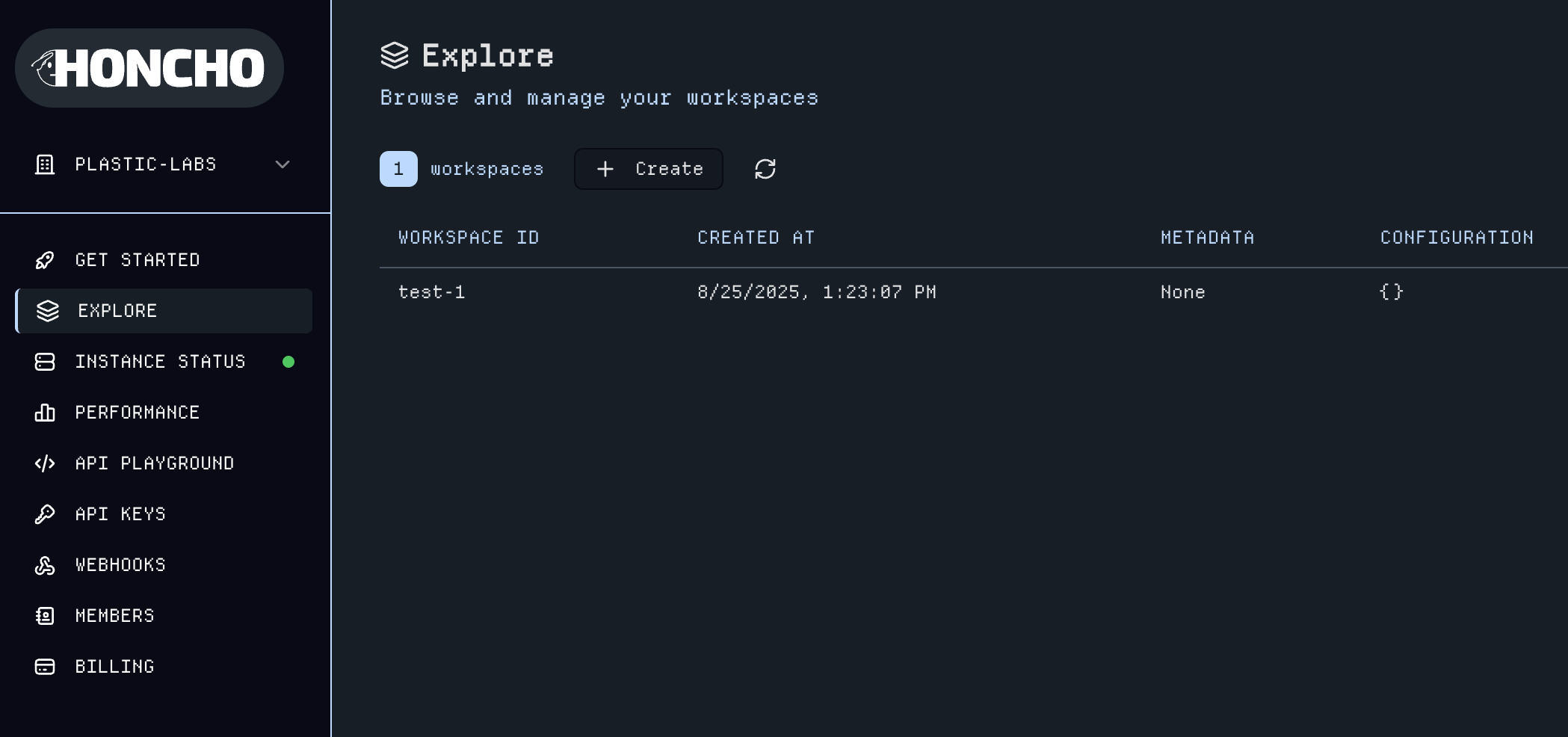

* Workspace Delete Method

* Multi-db option in test harness

### Changed

* Working Representations are Queried on the fly rather than cached in metadata

* EmbeddingStore to RepresentationFactory

* Summary Response Model to use public\_id of message for cutoff

* Semantics across codebase to reference resources based on `observer` and `observed`

* Prompts for Deriver & Dialectic to reference peer\_id and add examples

* `Get Context` route returns peer card and representation in addition to messages and summaries

* Refactoring logger.info calls to logger.debug where applicable

### Fixed

* Gemini client to use async methods

### Changed

* Deriver Rollup Queue processes interleaved messages for more context

### Fixed

* Dialectic Streaming to follow SSE conventions

* Sentry tracing in the deriver

### Added

* Get peer cards endpoint (`GET /v2/peers/{peer_id}/peer-card`) for retrieving targeted peer context information

### Changed

* Replaced Mirascope dependency with small client implementation for better control

* Optimized deriver performance by using joins on messages table instead of storing token count in queue payload

* Database scope optimization for various operations

* Batch representation task processing for \~10x speed improvement in practice

### Fixed

* Separated clean and claim work units in queue manager to prevent race conditions

* Skip locked ActiveQueueSession rows on delete operations

* Langfuse SDK integration updates for compatibility

* Added configurable maximum message size to prevent token overflow in deriver

* Various minor bugfixes

### Fixed

* Added max message count to deriver in order to not overflow token limits

### Added

* `getSummaries` endpoint to get all available summaries for a session directly

* Peer Card feature to improve context for deriver and dialectic

### Changed

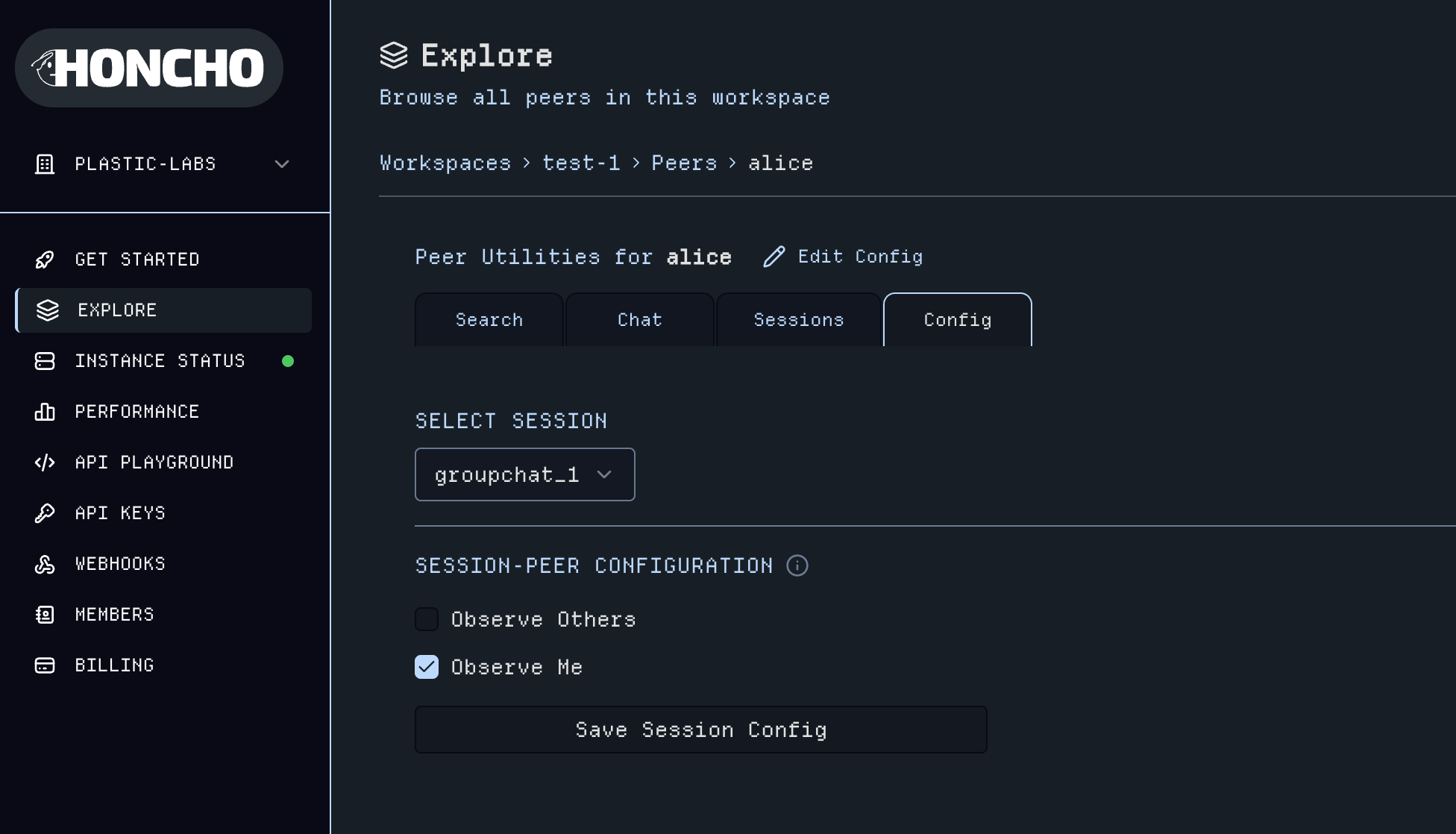

* Session Peer limit to be based on observers instead, renamed config value to `SESSION_OBSERVERS_LIMIT`

* `Messages` can take a custom timestamp for the `created_at` field, defaulting to the current time

* `get_context` endpoint returns detailed `Summary` object rather than just summary content

* Working representations use a FIFO queue structure to maintain facts rather than a full rewrite

* Optimized deriver enqueue by prefetching message sequence numbers (eliminates N+1 queries)

### Fixed

* Deriver uses `get_context` internally to prevent context window limit errors

* Embedding store will truncate context when querying documents to prevent embedding token limit errors

* Queue manager to schedule work based on available works rather than total number of workers

* Queue manager to use atomic db transactions rather than long lived transaction for the worker lifecycle

* Timestamp formats unified to ISO 8601 across the codebase

* Internal get\_context method's cutoff value is exclusive now

### Added

* Arbitrary filters now available on all search endpoints

* Search combines full-text and semantic using reciprocal rank fusion

* Webhook support (currently only supports queue\_empty and test events, more to come)

* Small test harness and custom test format for evaluating Honcho output quality

* Added MCP server and documentation for it
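
Reciprocal rank fusion, named in the search entry above, merges several ranked result lists by giving each item a score of `1 / (k + rank)` per list and summing. The sketch below shows the general technique only; Honcho's actual implementation and constant are not stated here, and `k = 60` is simply a commonly used default.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse ranked ID lists; items ranked highly in several lists rise to the top."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, item_id in enumerate(ranking, start=1):
            scores[item_id] += 1.0 / (k + rank)
    # Sort by descending score, breaking ties by ID for a stable result.
    return sorted(scores, key=lambda item_id: (-scores[item_id], item_id))


full_text_hits = ["m1", "m2", "m3"]  # illustrative message IDs
semantic_hits = ["m3", "m1", "m4"]
print(reciprocal_rank_fusion([full_text_hits, semantic_hits]))
# ['m1', 'm3', 'm2', 'm4']
```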

### Changed

* Search has 10 results by default, max 100 results

* Queue structure generalized to handle more event types

* Summarizer now exhaustive by default and tuned for performance

### Fixed

* Resolve race condition for peers that leave a session while sending messages

* Added explicit rollback to solve integrity error in queue

* Re-introduced Sentry tracing to deriver

* Better integrity logic in get\_or\_create API methods

### Fixed

* Summarizer module to ignore empty summaries and pass appropriate one to get\_context

* Structured Outputs calls with OpenAI provider to pass strict=True to Pydantic Schema

### Added

* Test harness for custom Honcho evaluations

* Better support for session and peer aware dialectic queries

* Langfuse settings

* Added recent history to dialectic prompt, dynamic based on new context window size setting

### Fixed

* Summary queue logic

* Formatting of logs

* Filtering by session

* Peer targeting in queries

### Changed

* Made query expansion in dialectic off by default

* Overhauled logging

* Refactor summarization for performance and code clarity

* Refactor queue payloads for clarity

### Added

* File uploads

* Brand new "ROTE" deriver system

* Updated dialectic system

* Local working representations

* Better logging for deriver/dialectic

* Deriver Queue Status no longer has redundant data

### Fixed

* Document insertion

* Session-scoped and peer-targeted dialectic queries work now

* Minor bugs

### Removed

* Peer-level messages

### Changed

* Dialectic chat endpoint takes a single query

* Rearranged configuration values (LLM, Deriver, Dialectic, History->Summary)

### Fixed

* Groq API client to use the Async library

### Fixed

* Migration/provision scripts did not have correct database connection arguments, causing timeouts

### Fixed

* Bug that causes runtime error when Sentry flags are enabled

### Fixed

* Database initialization was misconfigured and led to provision\_db script failing: switch to consistent working configuration with transaction pooler

### Added

* Ergonomic SDKs for Python and TypeScript (uses Stainless underneath)

* Deriver Queue Status endpoint

* Complex arbitrary filters on workspace/session/peer/message

* Message embedding table for full semantic search

### Changed

* Overhauled documentation

* BasedPyright typing for entire project

* Resource filtering expanded to include logical operators

### Fixed

* Various bugs

* Use new config arrangement everywhere

* Remove hardcoded responses

### Added

* Ability to get a peer's working representation

* Metadata to all data primitives (Workspaces, Peers, Sessions, Messages)

* Internal metadata to store Honcho's state no longer exposed in API

* Batch message operations and enhanced message querying with token and message count limits

* Search and summary functionalities scoped by workspace, peer, and session

* Session context retrieval with summaries and token allocation

* HNSW Index for Documents Table

* Centralized Configuration via Environment Variables or config.toml file

### Changed

* New architecture centered around the concept of a "peer" replaces the former "app"/"user"/"session" paradigm

* Workspaces replace "apps" as top-level namespace

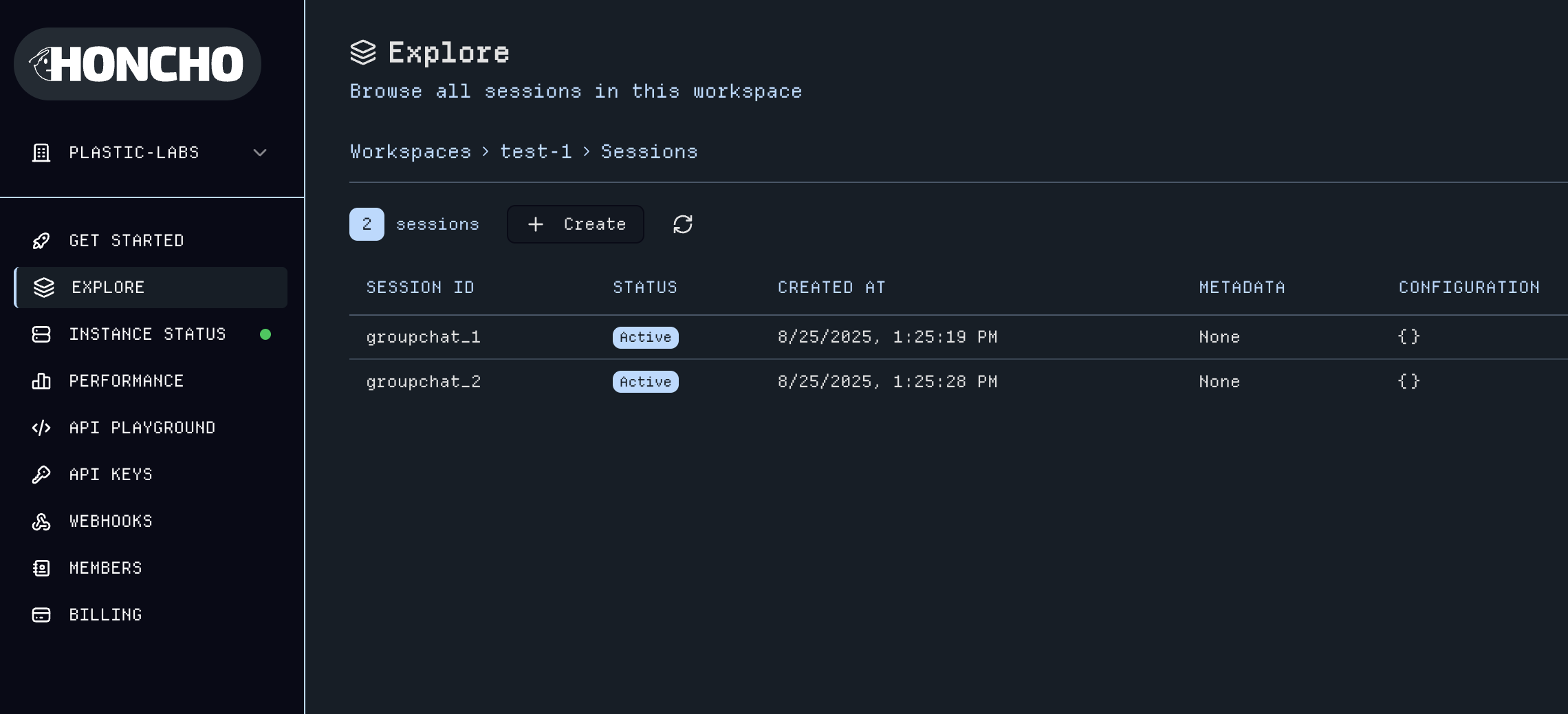

* Peers replace "users"

* Sessions no longer nested beneath peers and no longer limited to a single user-assistant model. A session exists independently of any one peer and peers can be added to and removed from sessions.

* Dialectic API is now part of the Peer, not the Session

* Dialectic API now allows queries to be scoped to a session or "targeted" to a fellow peer

* Database schema migrated to adopt workspace/peer/session naming and structure

* Authentication and JWT scopes updated to workspace/peer/session hierarchy

* Queue processing now works on 'work units' instead of sessions

* Message token counting updated with tiktoken integration and fallback heuristic

* Queue and message processing updated to handle sender/target and task types for multi-peer scenarios

### Fixed

* Improved error handling and validation for batch message operations and metadata

* Database Sessions to be more atomic to reduce idle in transaction time

### Removed

* Metamessages removed in favor of metadata

* Collections and Documents no longer exposed in the API, solely internal

* Obsolete tests for apps, users, collections, documents, and metamessages

***

### Added

* Normalize resources to remove joins and increase query performance

* Query tracing for debugging

### Changed

* `/list` endpoints to not require a request body

* `metamessage_type` to `label` with backwards compatibility

* Database Provisioning to rely on alembic

* Database Session Manager to explicitly rollback transactions before closing the connection

### Fixed

* Alembic Migrations to include initial database migrations

* Sentry Middleware to not report Honcho Exceptions

### Added

* JWT based API authentication

* Configurable logging

* Consolidated LLM Inference via `ModelClient` class

* Dynamic logging configurable via environment variables

### Changed

* Deriver & Dialectic API to use Hybrid Memory Architecture

* Metamessages are not strictly tied to a message

* Database provisioning is a separate script instead of happening on startup

* Consolidated `session/chat` and `session/chat/stream` endpoints

## Previous Releases

For a complete history of all releases, see our [GitHub Releases](https://github.com/plastic-labs/honcho/tags) page.

[Python SDK](https://pypi.org/project/honcho-ai/)

### Added

* Delete workspace method

### Changed

* message\_id of `Summary` model is a string nanoid

* Get Context can return Peer Card & Peer Representation

### Added

* Get Peer Card method

* Update Message metadata method

* Session level deriver status methods

* Delete session message

### Fixed

* Dialectic Stream returns Iterators

* Type warnings

### Changed

* Pagination class to match core implementation

### Added

* getSummaries API returning structured summaries

* Webhook support

### Changed

* Messages can take an optional `created_at` value, defaulting to the current time (UTC ISO 8601)

### Added

* Filter parameter to various endpoints

### Fixed

* Honcho util import paths

### Added

* Get/poll deriver queue status endpoints added to workspace

* Added endpoint to upload files as messages

### Removed

* Removed peer messages in accordance with Honcho 2.1.0

### Changed

* Updated chat endpoint to use singular `query` in accordance with Honcho 2.1.0

### Fixed

* Properly handle AsyncClient

[TypeScript SDK](https://www.npmjs.com/package/@honcho-ai/sdk)

### Added

* Delete workspace method

### Changed

* message\_id of `Summary` model is a string nanoid

* Get Context can return Peer Card & Peer Representation

### Added

* Get Peer Card method

* Update Message metadata method

* Session level deriver status methods

* Delete session message

### Fixed

* Dialectic Stream returns Iterators

* Type warnings

### Changed

* Pagination class to match core implementation

### Added

* getSummaries API returning structured summaries

* Webhook support

### Changed

* Messages can take an optional `created_at` value, defaulting to the current time (UTC ISO 8601)

### Added

* linting via Biome

* Adding filter parameter to various endpoints

### Fixed

* Order of parameters in `getSessions` endpoint

### Added

* Get/poll deriver queue status endpoints added to workspace

* Added endpoint to upload files as messages

### Removed

* Removed peer messages in accordance with Honcho 2.1.0

### Changed

* Updated chat endpoint to use singular `query` in accordance with Honcho 2.1.0

### Fixed

* Create default workspace on Honcho client instantiation

* Simplified Honcho client import path

## Getting Help

If you encounter issues using the Honcho API or its SDKs:

1. Open an issue on [GitHub](https://github.com/plastic-labs/honcho/issues)

2. Join our [Discord community](http://discord.gg/plasticlabs) for support

# Create Key

Source: https://docs.honcho.dev/v2/api-reference/endpoint/keys/create-key

post /v2/keys

Create a new Key

# Create Messages For Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/messages/create-messages-for-session

post /v2/workspaces/{workspace_id}/sessions/{session_id}/messages/

Create messages for a session with JSON data (original functionality).

# Create Messages With File

Source: https://docs.honcho.dev/v2/api-reference/endpoint/messages/create-messages-with-file

post /v2/workspaces/{workspace_id}/sessions/{session_id}/messages/upload

Create messages from uploaded files. Files are converted to text and split into multiple messages.

# Get Message

Source: https://docs.honcho.dev/v2/api-reference/endpoint/messages/get-message

get /v2/workspaces/{workspace_id}/sessions/{session_id}/messages/{message_id}

Get a Message by ID

# Get Messages

Source: https://docs.honcho.dev/v2/api-reference/endpoint/messages/get-messages

post /v2/workspaces/{workspace_id}/sessions/{session_id}/messages/list

Get all messages for a session

# Update Message

Source: https://docs.honcho.dev/v2/api-reference/endpoint/messages/update-message

put /v2/workspaces/{workspace_id}/sessions/{session_id}/messages/{message_id}

Update the metadata of a Message

# Metrics

Source: https://docs.honcho.dev/v2/api-reference/endpoint/metrics

get /metrics

Prometheus metrics endpoint

# Chat

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/chat

post /v2/workspaces/{workspace_id}/peers/{peer_id}/chat

# Get Or Create Peer

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/get-or-create-peer

post /v2/workspaces/{workspace_id}/peers

Get a Peer by ID

If peer_id is provided as a query parameter, it uses that (must match JWT workspace_id).

Otherwise, it uses the peer_id from the JWT.

# Get Peer Card

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/get-peer-card

get /v2/workspaces/{workspace_id}/peers/{peer_id}/card

Get a peer card for a specific peer relationship.

Returns the peer card that the observer peer has for the target peer if it exists.

If no target is specified, returns the observer's own peer card.

# Get Peers

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/get-peers

post /v2/workspaces/{workspace_id}/peers/list

Get All Peers for a Workspace

# Get Sessions For Peer

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/get-sessions-for-peer

post /v2/workspaces/{workspace_id}/peers/{peer_id}/sessions

Get All Sessions for a Peer

# Get Working Representation

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/get-working-representation

post /v2/workspaces/{workspace_id}/peers/{peer_id}/representation

Get a peer's working representation for a session.

If a session_id is provided in the body, we get the working representation of the peer in that session.

If a target is provided, we get the representation of the target from the perspective of the peer.

If no target is provided, we get the omniscient Honcho representation of the peer.

# Search Peer

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/search-peer

post /v2/workspaces/{workspace_id}/peers/{peer_id}/search

Search a Peer

# Update Peer

Source: https://docs.honcho.dev/v2/api-reference/endpoint/peers/update-peer

put /v2/workspaces/{workspace_id}/peers/{peer_id}

Update a Peer's name and/or metadata

# Add Peers To Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/add-peers-to-session

post /v2/workspaces/{workspace_id}/sessions/{session_id}/peers

Add peers to a session

# Clone Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/clone-session

get /v2/workspaces/{workspace_id}/sessions/{session_id}/clone

Clone a session, optionally up to a specific message

# Delete Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/delete-session

delete /v2/workspaces/{workspace_id}/sessions/{session_id}

Delete a session by marking it as inactive

# Get Or Create Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/get-or-create-session

post /v2/workspaces/{workspace_id}/sessions

Get a specific session in a workspace.

If session_id is provided as a query parameter, it verifies the session is in the workspace.

Otherwise, it uses the session_id from the JWT for verification.

# Get Peer Config

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/get-peer-config

get /v2/workspaces/{workspace_id}/sessions/{session_id}/peers/{peer_id}/config

Get the configuration for a peer in a session

# Get Session Context

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/get-session-context

get /v2/workspaces/{workspace_id}/sessions/{session_id}/context

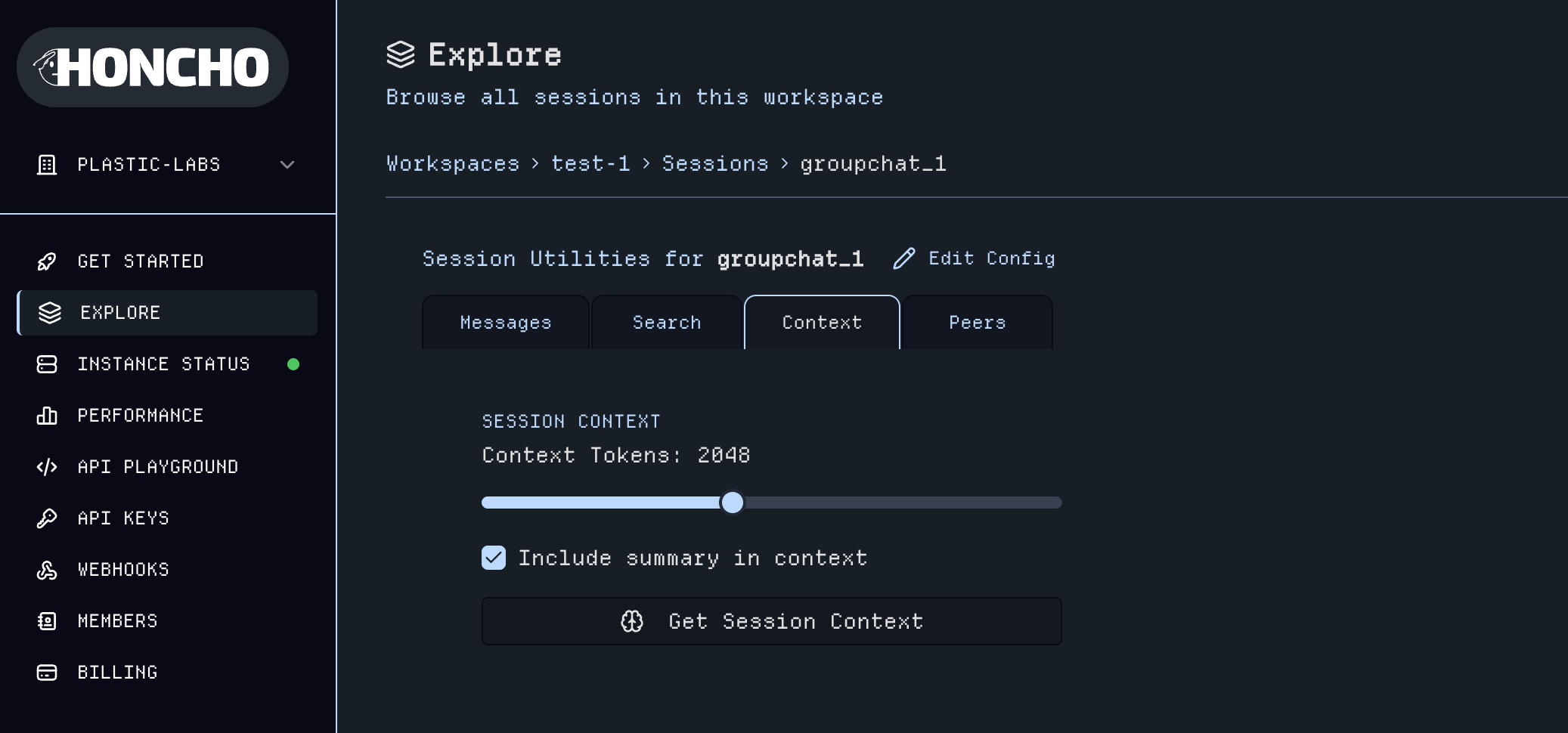

Produce a context object from the session. The caller provides an optional token limit which the entire context must fit into. If not provided, the context will be exhaustive (within configured max tokens). To do this, we allocate 40% of the token limit to the summary, and 60% to recent messages, as many as can fit. Note that the summary will usually take up less space than this. If the caller does not want a summary, we allocate all the tokens to recent messages.
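
The 40/60 split described above can be sketched as follows. This is an illustration of the arithmetic only, not the server's code; the function name and the returned keys are hypothetical.

```python
from typing import Dict


def allocate_context_tokens(token_limit: int, include_summary: bool = True) -> Dict[str, int]:
    """Split a token budget: 40% for the summary, the rest for recent messages."""
    if not include_summary:
        # Without a summary, the whole budget goes to recent messages.
        return {"summary": 0, "messages": token_limit}
    summary_budget = token_limit * 40 // 100
    return {"summary": summary_budget, "messages": token_limit - summary_budget}


print(allocate_context_tokens(1000))
# {'summary': 400, 'messages': 600}
print(allocate_context_tokens(1000, include_summary=False))
# {'summary': 0, 'messages': 1000}
```

Because the summary usually takes up less than its share, treat the summary figure as an upper bound.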

# Get Session Peers

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/get-session-peers

get /v2/workspaces/{workspace_id}/sessions/{session_id}/peers

Get peers from a session

# Get Session Summaries

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/get-session-summaries

get /v2/workspaces/{workspace_id}/sessions/{session_id}/summaries

Get available summaries for a session.

Returns both short and long summaries if available, including metadata like the message ID they cover up to, creation timestamp, and token count.

# Get Sessions

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/get-sessions

post /v2/workspaces/{workspace_id}/sessions/list

Get All Sessions in a Workspace

# Remove Peers From Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/remove-peers-from-session

delete /v2/workspaces/{workspace_id}/sessions/{session_id}/peers

Remove peers from a session

# Search Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/search-session

post /v2/workspaces/{workspace_id}/sessions/{session_id}/search

Search a Session

# Set Peer Config

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/set-peer-config

post /v2/workspaces/{workspace_id}/sessions/{session_id}/peers/{peer_id}/config

Set the configuration for a peer in a session

# Set Session Peers

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/set-session-peers

put /v2/workspaces/{workspace_id}/sessions/{session_id}/peers

Set the peers in a session

# Update Session

Source: https://docs.honcho.dev/v2/api-reference/endpoint/sessions/update-session

put /v2/workspaces/{workspace_id}/sessions/{session_id}

Update the metadata of a Session

# Delete Webhook Endpoint

Source: https://docs.honcho.dev/v2/api-reference/endpoint/webhooks/delete-webhook-endpoint

delete /v2/workspaces/{workspace_id}/webhooks/{endpoint_id}

Delete a specific webhook endpoint.

# Get Or Create Webhook Endpoint

Source: https://docs.honcho.dev/v2/api-reference/endpoint/webhooks/get-or-create-webhook-endpoint

post /v2/workspaces/{workspace_id}/webhooks

Get or create a webhook endpoint URL.

# List Webhook Endpoints

Source: https://docs.honcho.dev/v2/api-reference/endpoint/webhooks/list-webhook-endpoints

get /v2/workspaces/{workspace_id}/webhooks

List all webhook endpoints, optionally filtered by workspace.

# Test Emit

Source: https://docs.honcho.dev/v2/api-reference/endpoint/webhooks/test-emit

get /v2/workspaces/{workspace_id}/webhooks/test

Test publishing a webhook event.

# Delete Workspace

Source: https://docs.honcho.dev/v2/api-reference/endpoint/workspaces/delete-workspace

delete /v2/workspaces/{workspace_id}

Delete a Workspace

# Get All Workspaces

Source: https://docs.honcho.dev/v2/api-reference/endpoint/workspaces/get-all-workspaces

post /v2/workspaces/list

Get all Workspaces

# Get Deriver Status

Source: https://docs.honcho.dev/v2/api-reference/endpoint/workspaces/get-deriver-status

get /v2/workspaces/{workspace_id}/deriver/status

Get the deriver processing status, optionally scoped to an observer, sender, and/or session

# Get Or Create Workspace

Source: https://docs.honcho.dev/v2/api-reference/endpoint/workspaces/get-or-create-workspace

post /v2/workspaces

Get a Workspace by ID.

If workspace_id is provided as a query parameter, it uses that (must match JWT workspace_id).

Otherwise, it uses the workspace_id from the JWT.

# Search Workspace

Source: https://docs.honcho.dev/v2/api-reference/endpoint/workspaces/search-workspace

post /v2/workspaces/{workspace_id}/search

Search a Workspace

# Update Workspace

Source: https://docs.honcho.dev/v2/api-reference/endpoint/workspaces/update-workspace

put /v2/workspaces/{workspace_id}

Update a Workspace

# Introduction

Source: https://docs.honcho.dev/v2/api-reference/introduction

This section documents all available API endpoints in the Honcho Server. Each endpoint provides CRUD operations for our core primitives. For information about these primitives, see [Architecture](/v2/documentation/core-concepts/architecture).

We strongly recommend using our official SDKs instead of calling these APIs directly. The SDKs provide better error handling, type safety, and developer experience.

## Recommended approach

Use our official SDKs for the best development experience:

* [Python SDK](https://pypi.org/project/honcho-ai/)

* [TypeScript SDK](https://www.npmjs.com/package/@honcho-ai/sdk)

## When to use this API reference

This reference is primarily useful for:

* Debugging SDK behavior

* Building integrations in unsupported languages

* Understanding the underlying data structures

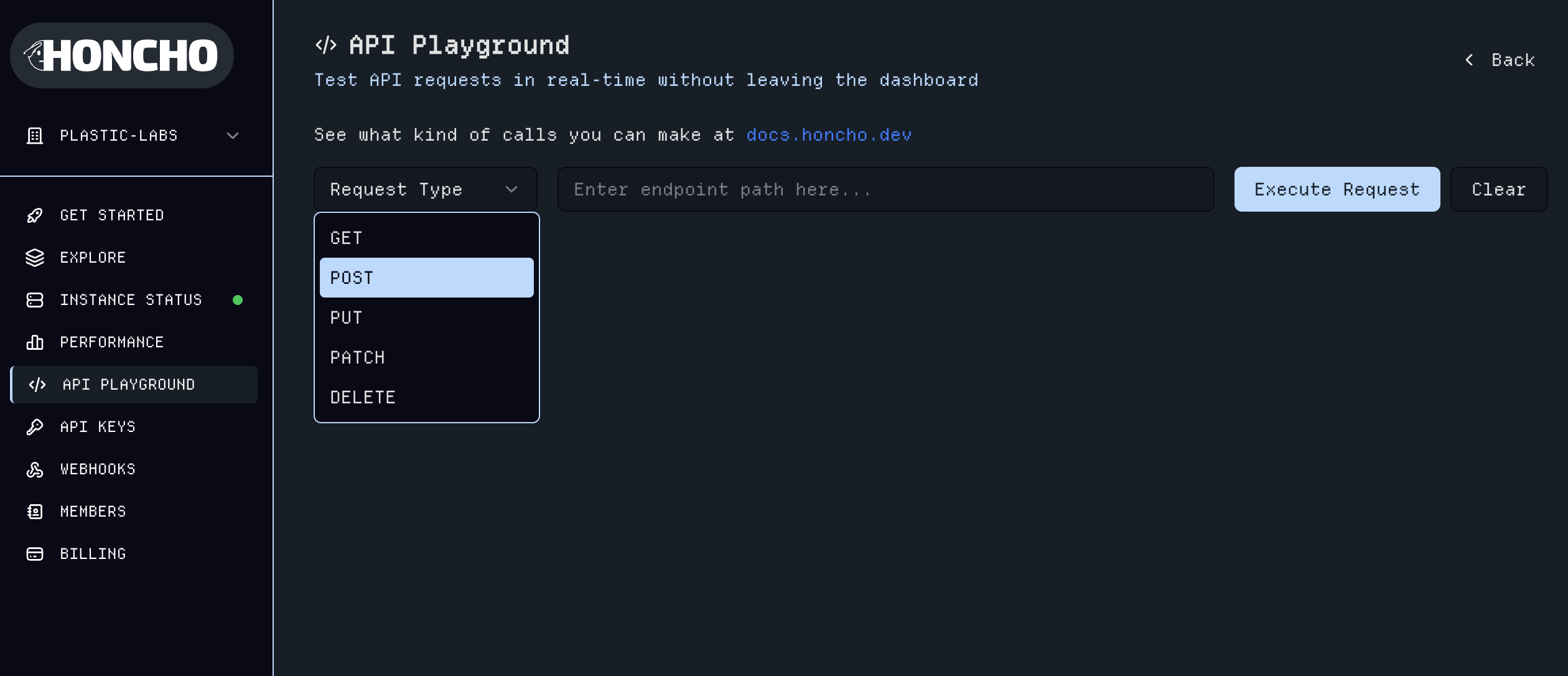

The endpoints pages are autogenerated and include interactive examples for testing.
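
When debugging against the raw API, it can help to build requests from the path templates listed in this reference. The sketch below constructs (but does not send) a request using only the Python standard library. The base URL, the `Bearer` authorization header format, and the helper name `build_request` are assumptions for illustration; check your deployment for the real values.

```python
from typing import Optional
from urllib.parse import quote
from urllib.request import Request

BASE_URL = "http://localhost:8000"  # assumption: adjust to your Honcho server


def build_request(method: str, template: str, token: Optional[str] = None,
                  **path_params: str) -> Request:
    """Fill a path template such as /v2/workspaces/{workspace_id}/peers/list."""
    # Percent-encode each path parameter so IDs with spaces or slashes stay valid.
    encoded = {name: quote(str(value), safe="") for name, value in path_params.items()}
    headers = {"Content-Type": "application/json"}
    if token:
        headers["Authorization"] = f"Bearer {token}"  # assumed header format
    return Request(BASE_URL + template.format(**encoded),
                   method=method.upper(), headers=headers)


req = build_request("post", "/v2/workspaces/{workspace_id}/peers/list",
                    workspace_id="my workspace")
print(req.get_method(), req.full_url)
# POST http://localhost:8000/v2/workspaces/my%20workspace/peers/list
```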

# Configuration Guide

Source: https://docs.honcho.dev/v2/contributing/configuration

Complete guide to configuring Honcho for development and production

Honcho uses a flexible configuration system that supports both TOML files and environment variables. Configuration values are loaded in the following priority order (highest to lowest):

1. Environment variables (always take precedence)

2. `.env` file (for local development)

3. `config.toml` file (base configuration)

4. Default values

## Recommended Configuration Approaches

### Option 1: Environment Variables Only (Production)

* Use environment variables for all configuration

* No config files needed

* Ideal for containerized deployments (Docker, Kubernetes)

* Secrets managed by your deployment platform

### Option 2: config.toml (Development/Simple Deployments)

* Use config.toml for base configuration

* Override sensitive values with environment variables

* Good for development and simple deployments

### Option 3: Hybrid Approach

* Use config.toml for non-sensitive base settings

* Use .env file for sensitive values (API keys, secrets)

* Good for development teams

### Option 4: .env Only (Local Development)

* Use .env file for all configuration

* Simple for local development

* Never commit .env files to version control

## Configuration Methods

### Using config.toml

Copy the example configuration file to get started:

```bash theme={null}

cp config.toml.example config.toml

```

Then modify the values as needed. The TOML file is organized into sections:

* `[app]` - Application-level settings (log level, session limits, embedding settings, Langfuse integration, local metrics collection)

* `[db]` - Database connection and pool settings (connection URI, pool size, timeouts, connection recycling)

* `[auth]` - Authentication configuration (enable/disable auth, JWT secret)

* `[cache]` - Redis cache configuration (enable/disable caching, Redis URL, TTL settings, lock configuration for cache stampede prevention)

* `[llm]` - LLM provider API keys (Anthropic, OpenAI, Gemini, Groq, OpenAI-compatible endpoints) and general LLM settings

* `[dialectic]` - Dialectic API configuration (provider, model, query generation settings, semantic search parameters, context window size)

* `[deriver]` - Background worker settings (worker count, polling intervals, queue management) and theory of mind configuration (model, tokens, observation limits)

* `[peer_card]` - Peer card generation settings (provider, model, token limits)

* `[summary]` - Session summarization settings (frequency thresholds, provider, model, token limits for short and long summaries)

* `[dream]` - Dream processing configuration (enable/disable, thresholds, idle timeouts, dream types, LLM settings)

* `[webhook]` - Webhook configuration (webhook secret, workspace limits)

* `[metrics]` - Metrics collection settings (enable/disable metrics, namespace)

* `[sentry]` - Error tracking and monitoring settings (enable/disable, DSN, environment, sample rates)

### Using Environment Variables

All configuration values can be overridden using environment variables. The environment variable names follow this pattern:

* `{SECTION}_{KEY}` for nested settings

* Just `{KEY}` for app-level settings

Examples:

* `DB_CONNECTION_URI` → `[db].CONNECTION_URI`

* `DB_POOL_SIZE` → `[db].POOL_SIZE`

* `AUTH_JWT_SECRET` → `[auth].JWT_SECRET`

* `DIALECTIC_MODEL` → `[dialectic].MODEL`

* `LOG_LEVEL` (no section) → `[app].LOG_LEVEL`
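
The naming pattern above can be expressed as a small resolver. This is a sketch of the convention as documented, not Honcho's actual settings loader; `SECTIONS` is transcribed from the section list in this guide and `resolve_env_name` is a hypothetical name.

```python
from typing import Tuple

# Section names taken from the config.toml section list; "app" is the fallback.
SECTIONS = (
    "db", "auth", "cache", "llm", "dialectic", "deriver",
    "peer_card", "summary", "dream", "webhook", "metrics", "sentry",
)


def resolve_env_name(name: str) -> Tuple[str, str]:
    """Map an environment variable name to a (section, key) pair."""
    # Check longer section names first so a multi-word prefix such as
    # PEER_CARD_ is matched as a whole.
    for section in sorted(SECTIONS, key=len, reverse=True):
        prefix = section.upper() + "_"
        if name.startswith(prefix):
            return section, name[len(prefix):]
    return "app", name  # no section prefix: app-level setting


print(resolve_env_name("DB_CONNECTION_URI"))  # ('db', 'CONNECTION_URI')
print(resolve_env_name("LOG_LEVEL"))          # ('app', 'LOG_LEVEL')
```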

### Configuration Priority

When a configuration value is set in multiple places, Honcho uses this priority:

1. **Environment variables** - Always take precedence

2. **.env file** - Loaded for local development

3. **config.toml** - Base configuration

4. **Default values** - Built-in defaults

This allows you to:

* Use `config.toml` for base configuration

* Override specific values with environment variables in production

* Use `.env` files for local development without modifying config.toml

### Example

If you have this in `config.toml`:

```toml theme={null}

[db]

CONNECTION_URI = "postgresql://localhost/honcho_dev"

POOL_SIZE = 10

```

You can override just the connection URI in production:

```bash theme={null}

export DB_CONNECTION_URI="postgresql://prod-server/honcho_prod"

```

The application will use the production connection URI while keeping the pool size from config.toml.
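
The same override behavior can be modeled as a lookup through ordered layers. The sketch below is a simplified illustration, not the real loader: it assumes every layer has already been flattened to environment-variable-style keys, and `resolve_setting` is a hypothetical name.

```python
from typing import Any, Mapping


def resolve_setting(key: str, env: Mapping[str, Any], dotenv: Mapping[str, Any],
                    toml: Mapping[str, Any], defaults: Mapping[str, Any]) -> Any:
    """Return the value from the highest-priority layer that defines the key."""
    for layer in (env, dotenv, toml, defaults):  # highest priority first
        if key in layer:
            return layer[key]
    raise KeyError(key)


toml_values = {"DB_CONNECTION_URI": "postgresql://localhost/honcho_dev", "DB_POOL_SIZE": 10}
env_values = {"DB_CONNECTION_URI": "postgresql://prod-server/honcho_prod"}

print(resolve_setting("DB_CONNECTION_URI", env_values, {}, toml_values, {}))
# postgresql://prod-server/honcho_prod
print(resolve_setting("DB_POOL_SIZE", env_values, {}, toml_values, {}))
# 10
```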

## Core Configuration

### Application Settings

Application-level settings control core behavior of the Honcho server including logging, session limits, message handling, and optional integrations.

**Basic Application Configuration:**

```bash theme={null}

# Logging and server settings

LOG_LEVEL=INFO # DEBUG, INFO, WARNING, ERROR, CRITICAL

# Session and context limits

SESSION_OBSERVERS_LIMIT=10 # Maximum number of observers per session

GET_CONTEXT_MAX_TOKENS=100000 # Maximum tokens for context retrieval

MAX_MESSAGE_SIZE=25000 # Maximum message size in characters

# Embedding settings

EMBED_MESSAGES=true # Enable vector embeddings for messages

MAX_EMBEDDING_TOKENS=8192 # Maximum tokens per embedding

MAX_EMBEDDING_TOKENS_PER_REQUEST=300000 # Batch embedding limit

```

**Optional Integrations:**

```bash theme={null}

# Langfuse integration for LLM observability

LANGFUSE_HOST=https://cloud.langfuse.com

LANGFUSE_PUBLIC_KEY=your-langfuse-public-key

# Local metrics collection

COLLECT_METRICS_LOCAL=false

LOCAL_METRICS_FILE=metrics.jsonl

```

### Database Configuration

**Required Database Settings:**

```bash theme={null}

# PostgreSQL connection string (required)

DB_CONNECTION_URI=postgresql+psycopg://username:password@host:port/database

# Example for local development

DB_CONNECTION_URI=postgresql+psycopg://postgres:postgres@localhost:5432/honcho

# Example for production

DB_CONNECTION_URI=postgresql+psycopg://honcho_user:secure_password@db.example.com:5432/honcho_prod

```
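
Before starting the server, a connection string in the format above can be sanity-checked with the standard library. This is a minimal sketch under the assumption that only the `postgresql` dialect is acceptable; `describe_db_uri` is a hypothetical helper, not part of Honcho.

```python
from typing import Any, Dict
from urllib.parse import urlsplit


def describe_db_uri(uri: str) -> Dict[str, Any]:
    """Break a postgresql+driver://user:pass@host:port/db URI into its parts."""
    parts = urlsplit(uri)
    dialect, _, driver = parts.scheme.partition("+")
    if dialect != "postgresql":
        raise ValueError(f"expected a postgresql URI, got scheme {parts.scheme!r}")
    return {
        "driver": driver or None,        # e.g. "psycopg"
        "username": parts.username,
        "host": parts.hostname,
        "port": parts.port,              # None when the URI omits the port
        "database": parts.path.lstrip("/"),
    }


info = describe_db_uri("postgresql+psycopg://postgres:postgres@localhost:5432/honcho")
print(info["driver"], info["host"], info["port"], info["database"])
# psycopg localhost 5432 honcho
```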

**Database Pool Settings:**

```bash theme={null}

# Connection pool configuration

DB_SCHEMA=public

DB_POOL_SIZE=10

DB_MAX_OVERFLOW=20

DB_POOL_TIMEOUT=30

DB_POOL_RECYCLE=300

DB_POOL_PRE_PING=true

DB_SQL_DEBUG=false

DB_TRACING=false

```

**Docker Compose for PostgreSQL:**

```yaml theme={null}

# docker-compose.yml
version: '3.8'
services:
  database:
    image: pgvector/pgvector:pg15
    environment:
      POSTGRES_USER: postgres
      POSTGRES_PASSWORD: postgres
      POSTGRES_DB: honcho
    ports:
      - "5432:5432"
    volumes:
      - postgres_data:/var/lib/postgresql/data
      - ./init.sql:/docker-entrypoint-initdb.d/init.sql
volumes:
  postgres_data:

```

### Authentication Configuration

**JWT Authentication:**

```bash theme={null}

# Enable/disable authentication

AUTH_USE_AUTH=false # Set to true for production

# JWT settings (required if AUTH_USE_AUTH is true)

AUTH_JWT_SECRET=your-super-secret-jwt-key

```

**Generate JWT Secret:**

```bash theme={null}

# Generate a secure JWT secret

python scripts/generate_jwt_secret.py

```
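
If the script is not at hand, a comparable random secret can be produced with Python's standard `secrets` module. This sketch is not the contents of `scripts/generate_jwt_secret.py`; the function name and the 32-byte length are assumptions.

```python
import secrets


def generate_jwt_secret(num_bytes: int = 32) -> str:
    """Return a cryptographically random hex string (two characters per byte)."""
    return secrets.token_hex(num_bytes)


secret = generate_jwt_secret()
print(f"AUTH_JWT_SECRET={secret}")  # 64 hex characters for the default length
```

Store the value in your deployment platform's secret manager or a local `.env` file rather than in `config.toml`.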

### Cache Configuration

Honcho supports Redis caching to improve performance by caching frequently accessed data like peers, sessions, and working representations. Caching also includes lock mechanisms to prevent cache stampede scenarios.

**Redis Cache Settings:**

```bash theme={null}

# Enable/disable Redis caching

CACHE_ENABLED=false # Set to true to enable caching

# Redis connection

CACHE_URL=redis://localhost:6379/0?suppress=false

# Cache namespace and TTL

CACHE_NAMESPACE=honcho # Prefix for all cache keys

CACHE_DEFAULT_TTL_SECONDS=300 # How long items stay in cache (5 minutes)

# Lock settings for preventing cache stampede

CACHE_DEFAULT_LOCK_TTL_SECONDS=5 # Lock duration when fetching from DB on cache miss

```
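The stampede protection described above can be illustrated with an in-process sketch. It uses a plain dictionary and `threading.Lock` in place of Redis, so it is not Honcho's implementation; `get_or_load` and the key format are hypothetical. In a Redis-backed version the lock would itself expire (the role of `CACHE_DEFAULT_LOCK_TTL_SECONDS`) so that a crashed worker cannot block others indefinitely.

```python
import threading
import time
from typing import Any, Callable

NAMESPACE = "honcho"        # mirrors CACHE_NAMESPACE
DEFAULT_TTL_SECONDS = 300   # mirrors CACHE_DEFAULT_TTL_SECONDS

_store = {}                 # full key -> (expires_at, value)
_locks = {}                 # full key -> lock guarding the DB fetch for that key
_locks_guard = threading.Lock()


def _lock_for(full_key: str) -> threading.Lock:
    with _locks_guard:
        return _locks.setdefault(full_key, threading.Lock())


def _fresh(full_key: str):
    entry = _store.get(full_key)
    if entry is not None and entry[0] > time.monotonic():
        return entry
    return None


def get_or_load(key: str, loader: Callable[[], Any], ttl: float = DEFAULT_TTL_SECONDS) -> Any:
    """Return the cached value, calling loader() at most once per expiry."""
    full_key = f"{NAMESPACE}:{key}"
    entry = _fresh(full_key)
    if entry is not None:
        return entry[1]
    with _lock_for(full_key):
        # Re-check: another thread may have filled the cache while we waited.
        entry = _fresh(full_key)
        if entry is not None:
            return entry[1]
        value = loader()  # the expensive database read
        _store[full_key] = (time.monotonic() + ttl, value)
        return value
```

The second lookup inside the lock is what prevents the stampede: threads that lose the race find the freshly cached value instead of repeating the database read.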

**When to Enable Caching:**

* High-traffic production environments

* Applications with many repeated reads of the same data

* When you need to reduce database load

**Note:** Caching requires a Redis instance. You can run Redis locally with Docker:

```bash theme={null}

docker run -d -p 6379:6379 redis:latest

```

## LLM Provider Configuration

Honcho supports multiple LLM providers for different tasks. API keys are configured in the `[llm]` section, while specific features use their own configuration sections.

### API Keys

All provider API keys use the `LLM_` prefix:

```bash theme={null}

# Provider API Keys

LLM_ANTHROPIC_API_KEY=your-anthropic-api-key

LLM_OPENAI_API_KEY=your-openai-api-key

LLM_GEMINI_API_KEY=your-gemini-api-key

LLM_GROQ_API_KEY=your-groq-api-key

# OpenAI-compatible endpoints

LLM_OPENAI_COMPATIBLE_API_KEY=your-api-key

LLM_OPENAI_COMPATIBLE_BASE_URL=https://your-openai-compatible-endpoint.com

```

### General LLM Settings

```bash theme={null}

# Default settings for all LLM calls

LLM_DEFAULT_MAX_TOKENS=2500

# Embedding provider (used when EMBED_MESSAGES=true)

LLM_EMBEDDING_PROVIDER=openai # Options: openai, gemini

```

### Feature-Specific Model Configuration

Different features can use different providers and models:

**Dialectic API:**

The Dialectic API provides theory-of-mind informed responses by integrating long-term facts with current context.

```bash theme={null}

# Main dialectic model (default: Anthropic)

DIALECTIC_PROVIDER=anthropic

DIALECTIC_MODEL=claude-sonnet-4-20250514

DIALECTIC_MAX_OUTPUT_TOKENS=2500

DIALECTIC_THINKING_BUDGET_TOKENS=1024 # Only used with Anthropic provider

DIALECTIC_CONTEXT_WINDOW_SIZE=100000 # Maximum context window tokens

# Query generation for dialectic searches

DIALECTIC_PERFORM_QUERY_GENERATION=false # Enable query generation for semantic search

DIALECTIC_QUERY_GENERATION_PROVIDER=groq

DIALECTIC_QUERY_GENERATION_MODEL=llama-3.1-8b-instant

# Semantic search settings

DIALECTIC_SEMANTIC_SEARCH_TOP_K=10 # Number of results to retrieve

DIALECTIC_SEMANTIC_SEARCH_MAX_DISTANCE=0.85 # Maximum distance for relevance

```
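The two semantic search settings interact as a filter followed by a cutoff. The sketch below assumes that a smaller distance means a closer match and that the bound is inclusive; neither detail is stated in this guide, and `select_results` is a hypothetical name.

```python
from typing import List, Tuple

TOP_K = 10           # mirrors DIALECTIC_SEMANTIC_SEARCH_TOP_K
MAX_DISTANCE = 0.85  # mirrors DIALECTIC_SEMANTIC_SEARCH_MAX_DISTANCE


def select_results(
    candidates: List[Tuple[str, float]],
    top_k: int = TOP_K,
    max_distance: float = MAX_DISTANCE,
) -> List[Tuple[str, float]]:
    """Drop candidates beyond max_distance, then keep the top_k closest."""
    within = [c for c in candidates if c[1] <= max_distance]
    within.sort(key=lambda c: c[1])  # closest first
    return within[:top_k]
```

Lowering `DIALECTIC_SEMANTIC_SEARCH_MAX_DISTANCE` makes retrieval stricter, while `DIALECTIC_SEMANTIC_SEARCH_TOP_K` only caps how many of the surviving results are used.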

**Deriver (Theory of Mind):**

The Deriver is a background processing system that extracts facts from messages and builds theory-of-mind representations of peers.

```bash theme={null}

# LLM settings for deriver

DERIVER_PROVIDER=google

DERIVER_MODEL=gemini-2.5-flash-lite

DERIVER_MAX_OUTPUT_TOKENS=10000

DERIVER_THINKING_BUDGET_TOKENS=1024 # Only used with Anthropic provider

DERIVER_MAX_INPUT_TOKENS=23000 # Maximum input tokens for deriver

# Worker settings

DERIVER_WORKERS=1 # Number of background worker processes

DERIVER_POLLING_SLEEP_INTERVAL_SECONDS=1.0 # Time between queue checks

DERIVER_STALE_SESSION_TIMEOUT_MINUTES=5 # Timeout for stale sessions

# Queue management

DERIVER_QUEUE_ERROR_RETENTION_SECONDS=2592000 # Keep errored items for 30 days

# Working representation settings

DERIVER_WORKING_REPRESENTATION_MAX_OBSERVATIONS=50 # Max observations stored

DERIVER_REPRESENTATION_BATCH_MAX_TOKENS=4096 # Max tokens per batch

```

**Peer Card:**

Peer cards are short, structured summaries of peer identity and characteristics.

```bash theme={null}

# Enable/disable peer card generation

PEER_CARD_ENABLED=true

# LLM settings for peer card generation

PEER_CARD_PROVIDER=openai

PEER_CARD_MODEL=gpt-5-nano-2025-08-07

PEER_CARD_MAX_OUTPUT_TOKENS=4000 # Includes thinking tokens for GPT-5 models

```

**Summary Generation:**

Session summaries provide compressed context for long conversations. Honcho creates two types: short summaries (frequent) and long summaries (comprehensive).

```bash theme={null}

# Enable/disable summarization

SUMMARY_ENABLED=true

# LLM settings for summary generation

SUMMARY_PROVIDER=openai

SUMMARY_MODEL=gpt-4o-mini-2024-07-18

SUMMARY_MAX_TOKENS_SHORT=1000 # Max tokens for short summaries

SUMMARY_MAX_TOKENS_LONG=4000 # Max tokens for long summaries

SUMMARY_THINKING_BUDGET_TOKENS=512 # Only used with Anthropic provider

# Summary frequency thresholds

SUMMARY_MESSAGES_PER_SHORT_SUMMARY=20 # Create short summary every N messages

SUMMARY_MESSAGES_PER_LONG_SUMMARY=60 # Create long summary every N messages

```
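As an illustration of the frequency thresholds, the sketch below reports which summaries would be created at a given message count. It assumes summaries are triggered when the session's message count reaches an exact multiple of each threshold; the actual trigger logic may differ, and `summaries_due` is a hypothetical helper.

```python
from typing import List

SHORT_EVERY = 20  # mirrors SUMMARY_MESSAGES_PER_SHORT_SUMMARY
LONG_EVERY = 60   # mirrors SUMMARY_MESSAGES_PER_LONG_SUMMARY


def summaries_due(
    message_count: int,
    short_every: int = SHORT_EVERY,
    long_every: int = LONG_EVERY,
) -> List[str]:
    """Return the summary types due at this message count (assumed rule)."""
    due = []
    if message_count > 0 and message_count % short_every == 0:
        due.append("short")
    if message_count > 0 and message_count % long_every == 0:
        due.append("long")
    return due
```

With the example values, a 60-message session would have produced three short summaries and one long summary under this rule.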

### Default Provider Usage

By default, Honcho uses:

* **Anthropic** (Claude) for dialectic API responses

* **Groq** for query generation (fast, cost-effective)

* **Google** (Gemini) for theory of mind derivation

* **OpenAI** (GPT) for peer cards and summarization

* **OpenAI** for embeddings (if `EMBED_MESSAGES=true`)

You only need to set the API keys for the providers you plan to use. All providers are configurable per feature.
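A small check can report which API keys your current provider choices require. This is a sketch under stated assumptions: the defaults are copied from the examples in this guide, the `google` provider is assumed to read `LLM_GEMINI_API_KEY`, feature enable flags and the embedding provider are ignored, and `missing_api_keys` is a hypothetical helper.

```python
import os
from typing import List, Mapping

# Feature -> (provider variable, default provider), taken from the examples above.
FEATURE_PROVIDERS = {
    "dialectic": ("DIALECTIC_PROVIDER", "anthropic"),
    "deriver": ("DERIVER_PROVIDER", "google"),
    "peer_card": ("PEER_CARD_PROVIDER", "openai"),
    "summary": ("SUMMARY_PROVIDER", "openai"),
    "dream": ("DREAM_PROVIDER", "openai"),
}

# Assumption: the "google" provider uses the Gemini key.
PROVIDER_KEY_VARS = {
    "anthropic": "LLM_ANTHROPIC_API_KEY",
    "openai": "LLM_OPENAI_API_KEY",
    "google": "LLM_GEMINI_API_KEY",
    "groq": "LLM_GROQ_API_KEY",
}


def _is_true(value: str) -> bool:
    return value.strip().lower() in {"1", "true", "yes"}


def missing_api_keys(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the key variables that are needed but unset, sorted by name."""
    providers = {env.get(var, default) for var, default in FEATURE_PROVIDERS.values()}
    # Query generation only needs a key when it is switched on.
    if _is_true(env.get("DIALECTIC_PERFORM_QUERY_GENERATION", "false")):
        providers.add(env.get("DIALECTIC_QUERY_GENERATION_PROVIDER", "groq"))
    needed = {PROVIDER_KEY_VARS[p] for p in providers if p in PROVIDER_KEY_VARS}
    return sorted(name for name in needed if not env.get(name))
```

Providers not listed in `PROVIDER_KEY_VARS`, such as an OpenAI-compatible endpoint, are skipped rather than reported.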

## Additional Features Configuration

### Dream Processing

Dream processing consolidates and refines peer representations during idle periods, similar to how human memory consolidation works during sleep.

**Dream Settings:**

```bash theme={null}

# Enable/disable dream processing

DREAM_ENABLED=true

# Trigger thresholds

DREAM_DOCUMENT_THRESHOLD=50 # Minimum documents to trigger a dream

DREAM_IDLE_TIMEOUT_MINUTES=60 # Minutes of inactivity before dream can start

DREAM_MIN_HOURS_BETWEEN_DREAMS=8 # Minimum hours between dreams for a peer

# Dream types to enable

DREAM_ENABLED_TYPES=["consolidate"] # Currently supported: consolidate

# LLM settings for dream processing

DREAM_PROVIDER=openai

DREAM_MODEL=gpt-4o-mini-2024-07-18

DREAM_MAX_OUTPUT_TOKENS=2000

```
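The three trigger thresholds can be read as conditions that must all hold before a dream starts. The sketch below encodes that reading; how Honcho actually combines them, and what it counts as a document, are assumptions here, and `dream_is_due` is a hypothetical helper.

```python
from datetime import datetime, timedelta
from typing import Optional


def dream_is_due(
    document_count: int,
    last_activity: datetime,
    last_dream: Optional[datetime],
    now: datetime,
    *,
    document_threshold: int = 50,       # mirrors DREAM_DOCUMENT_THRESHOLD
    idle_timeout_minutes: int = 60,     # mirrors DREAM_IDLE_TIMEOUT_MINUTES
    min_hours_between_dreams: int = 8,  # mirrors DREAM_MIN_HOURS_BETWEEN_DREAMS
) -> bool:
    """Return True when all three thresholds are satisfied (assumed rule)."""
    if document_count < document_threshold:
        return False  # not enough new material to consolidate
    if now - last_activity < timedelta(minutes=idle_timeout_minutes):
        return False  # the peer is still active
    if last_dream is not None and now - last_dream < timedelta(hours=min_hours_between_dreams):
        return False  # a dream ran too recently
    return True
```

Raising `DREAM_MIN_HOURS_BETWEEN_DREAMS` is the most direct way to cap the LLM cost of dream processing for very active peers.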

### Webhook Configuration

Webhooks allow you to receive real-time notifications when events occur in Honcho (e.g., new messages, session updates).

**Webhook Settings:**

```bash theme={null}

# Webhook secret for signing payloads (optional but recommended)

WEBHOOK_SECRET=your-webhook-signing-secret

# Limit on webhooks per workspace

WEBHOOK_MAX_WORKSPACE_LIMIT=10

```
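On the receiving side, a signed payload is typically verified by recomputing the signature with the shared secret. The sketch below assumes an HMAC-SHA256 signature over the raw request body, encoded as hex; this guide does not specify the algorithm, encoding, or header name, so confirm them against the webhook documentation before relying on this.

```python
import hashlib
import hmac


def sign_payload(secret: str, body: bytes) -> str:
    """Compute a hex HMAC-SHA256 signature (assumed scheme, not confirmed)."""
    return hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()


def verify_signature(secret: str, body: bytes, received_signature: str) -> bool:
    """Compare signatures in constant time to avoid timing leaks."""
    expected = sign_payload(secret, body)
    return hmac.compare_digest(expected, received_signature)
```

Verify against the exact bytes received, before any JSON parsing, since re-serializing the payload can change whitespace and invalidate the signature.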

### Metrics Collection

Enable metrics collection for monitoring Honcho performance and usage.

**Metrics Settings:**

```bash theme={null}

# Enable/disable metrics collection

METRICS_ENABLED=false

# Namespace for metrics (used in metric names)

METRICS_NAMESPACE=honcho

```

## Monitoring Configuration

### Sentry Error Tracking

**Sentry Settings:**

```bash theme={null}

# Enable/disable Sentry error tracking

SENTRY_ENABLED=false

# Sentry configuration

SENTRY_DSN=https://your-sentry-dsn@sentry.io/project-id

SENTRY_RELEASE=2.4.0 # Optional: track which version errors come from

SENTRY_ENVIRONMENT=production # Environment name (development, staging, production)

# Sampling rates (0.0 to 1.0)

SENTRY_TRACES_SAMPLE_RATE=0.1 # 10% of transactions tracked

SENTRY_PROFILES_SAMPLE_RATE=0.1 # 10% of transactions profiled

```

## Environment-Specific Examples

### Development Configuration

**config.toml for development:**

```toml theme={null}

[app]

LOG_LEVEL = "DEBUG"

SESSION_OBSERVERS_LIMIT = 10

EMBED_MESSAGES = false

[db]

CONNECTION_URI = "postgresql+psycopg://postgres:postgres@localhost:5432/honcho_dev"

POOL_SIZE = 5

[auth]

USE_AUTH = false

[cache]

ENABLED = false

[dialectic]

PROVIDER = "anthropic"

MODEL = "claude-sonnet-4-20250514"

PERFORM_QUERY_GENERATION = false

MAX_OUTPUT_TOKENS = 2500

[deriver]

WORKERS = 1

PROVIDER = "google"

MODEL = "gemini-2.5-flash-lite"

[peer_card]

ENABLED = true

PROVIDER = "openai"

MODEL = "gpt-5-nano-2025-08-07"

[summary]

ENABLED = true

PROVIDER = "openai"

MODEL = "gpt-4o-mini-2024-07-18"

MAX_TOKENS_SHORT = 1000

MAX_TOKENS_LONG = 4000

[dream]

ENABLED = true

[webhook]

MAX_WORKSPACE_LIMIT = 10

[metrics]

ENABLED = false

[sentry]

ENABLED = false

```

**Environment variables for development:**

```bash theme={null}

# .env.development

LOG_LEVEL=DEBUG

DB_CONNECTION_URI=postgresql+psycopg://postgres:postgres@localhost:5432/honcho_dev

AUTH_USE_AUTH=false

CACHE_ENABLED=false

# LLM Provider API Keys

LLM_ANTHROPIC_API_KEY=your-dev-anthropic-key

LLM_OPENAI_API_KEY=your-dev-openai-key

LLM_GEMINI_API_KEY=your-dev-gemini-key

```
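Comparing the two development examples suggests a naming rule: keys in `[app]` become unprefixed variables, and keys in any other section are prefixed with the upper-cased section name. The sketch below applies that inferred rule to a dictionary shaped like a parsed `config.toml`; the helper names are hypothetical and the rule is an inference from the examples, not a documented guarantee.

```python
from typing import Any, Dict


def env_name(section: str, key: str) -> str:
    """Map a TOML section and key to an environment variable name."""
    if section == "app":
        return key.upper()  # e.g. [app] LOG_LEVEL -> LOG_LEVEL
    return f"{section.upper()}_{key.upper()}"  # e.g. [db] POOL_SIZE -> DB_POOL_SIZE


def env_value(value: Any) -> str:
    """Render a TOML value the way the .env examples write it."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "true" if value else "false"
    return str(value)


def to_env(config: Dict[str, Dict[str, Any]]) -> Dict[str, str]:
    """Flatten a parsed config into environment variable assignments."""
    return {
        env_name(section, key): env_value(value)
        for section, keys in config.items()
        for key, value in keys.items()
    }


example = {
    "app": {"LOG_LEVEL": "DEBUG"},
    "db": {"POOL_SIZE": 5},
    "auth": {"USE_AUTH": False},
    "peer_card": {"ENABLED": True},
}
```

Calling `to_env(example)` yields names such as `DB_POOL_SIZE` and `PEER_CARD_ENABLED`, matching the variables used throughout this guide.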

### Production Configuration

**config.toml for production:**

```toml theme={null}

[app]

LOG_LEVEL = "WARNING"

SESSION_OBSERVERS_LIMIT = 10

EMBED_MESSAGES = true

[db]

CONNECTION_URI = "postgresql+psycopg://honcho_user:secure_password@prod-db:5432/honcho_prod"

POOL_SIZE = 20

MAX_OVERFLOW = 40

[auth]

USE_AUTH = true

[cache]

ENABLED = true

URL = "redis://redis:6379/0"

DEFAULT_TTL_SECONDS = 300

[dialectic]

PROVIDER = "anthropic"

MODEL = "claude-sonnet-4-20250514"

PERFORM_QUERY_GENERATION = false

MAX_OUTPUT_TOKENS = 2500

[deriver]

WORKERS = 4

PROVIDER = "google"

MODEL = "gemini-2.5-flash-lite"

[peer_card]

ENABLED = true

PROVIDER = "openai"

MODEL = "gpt-5-nano-2025-08-07"

[summary]

ENABLED = true

PROVIDER = "openai"

MODEL = "gpt-4o-mini-2024-07-18"

MAX_TOKENS_SHORT = 1000

MAX_TOKENS_LONG = 4000

[dream]

ENABLED = true

PROVIDER = "openai"

MODEL = "gpt-4o-mini-2024-07-18"

[webhook]

MAX_WORKSPACE_LIMIT = 10

[metrics]

ENABLED = true

[sentry]

ENABLED = true

ENVIRONMENT = "production"

TRACES_SAMPLE_RATE = 0.1

PROFILES_SAMPLE_RATE = 0.1

```

**Environment variables for production:**

```bash theme={null}

# .env.production

LOG_LEVEL=WARNING

DB_CONNECTION_URI=postgresql+psycopg://honcho_user:secure_password@prod-db:5432/honcho_prod

# Authentication

AUTH_USE_AUTH=true

AUTH_JWT_SECRET=your-super-secret-jwt-key

# Cache

CACHE_ENABLED=true

CACHE_URL=redis://redis:6379/0

# LLM Provider API Keys

LLM_ANTHROPIC_API_KEY=your-prod-anthropic-key

LLM_OPENAI_API_KEY=your-prod-openai-key

LLM_GEMINI_API_KEY=your-prod-gemini-key

LLM_GROQ_API_KEY=your-prod-groq-key

# Webhooks

WEBHOOK_SECRET=your-webhook-signing-secret

# Monitoring

SENTRY_DSN=https://your-sentry-dsn@sentry.io/project-id

SENTRY_ENVIRONMENT=production

```

## Migration Management

**Running Database Migrations:**

```bash theme={null}

# Check current migration status

uv run alembic current

# Upgrade to latest

uv run alembic upgrade head

# Downgrade to specific revision

uv run alembic downgrade revision_id

# Create new migration

uv run alembic revision --autogenerate -m "Description of changes"

```

## Troubleshooting

**Common Configuration Issues:**

1. **Database Connection Errors**

   * Ensure `DB_CONNECTION_URI` uses the `postgresql+psycopg://` prefix
   * Verify that the database is running and accessible
   * Check that the pgvector extension is installed

2. **Authentication Issues**

   * Set `AUTH_USE_AUTH=true` for production
   * Generate and set `AUTH_JWT_SECRET` if authentication is enabled
   * Use `python scripts/generate_jwt_secret.py` to create a secure secret

3. **LLM Provider Issues**

   * Verify API keys are set correctly
   * Check that model names match provider specifications
   * Ensure the provider is enabled in configuration

4. **Deriver Issues**

   * Increase `DERIVER_WORKERS` for better performance
   * Check `DERIVER_STALE_SESSION_TIMEOUT_MINUTES` for session cleanup
   * Monitor background processing logs
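The database and authentication checks above can be automated before startup. The following is a minimal sketch, assuming the variable names used in this guide; `check_config` is a hypothetical helper and is not part of Honcho.

```python
import os
from typing import List, Mapping
from urllib.parse import urlsplit

REQUIRED_SCHEME = "postgresql+psycopg"
PLACEHOLDER_SECRET = "your-super-secret-jwt-key"  # the example value used in this guide


def check_config(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return a list of human-readable configuration problems."""
    problems = []

    uri = env.get("DB_CONNECTION_URI", "")
    if not uri:
        problems.append("DB_CONNECTION_URI is not set")
    elif urlsplit(uri).scheme != REQUIRED_SCHEME:
        problems.append(f"DB_CONNECTION_URI must start with {REQUIRED_SCHEME}://")

    if env.get("AUTH_USE_AUTH", "false").strip().lower() == "true":
        secret = env.get("AUTH_JWT_SECRET", "")
        if not secret:
            problems.append("AUTH_JWT_SECRET is required when AUTH_USE_AUTH=true")
        elif secret == PLACEHOLDER_SECRET:
            problems.append("AUTH_JWT_SECRET is still the placeholder value")

    return problems


if __name__ == "__main__":
    for problem in check_config():
        print(f"config problem: {problem}")
```

The check only inspects variable values; it does not connect to the database or confirm that pgvector is installed.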

This configuration guide covers all the settings available in Honcho. Always use environment-specific configuration files and never commit sensitive values like API keys or JWT secrets to version control.

# Contributing Guidelines

Source: https://docs.honcho.dev/v2/contributing/guidelines

Thank you for your interest in contributing to Honcho! This guide outlines the process for contributing to the project and our development conventions.

## Getting Started

Before you start contributing, please:

1. **Set up your development environment** - Follow the [Local Development guide](https://github.com/plastic-labs/honcho/blob/main/CONTRIBUTING.md#local-development) in the Honcho repository to get Honcho running locally.

2. **Join our community** - Feel free to join us in our [Discord](http://discord.gg/plasticlabs) to discuss your changes, get help, or ask questions.

3. **Review existing issues** - Check the [issues tab](https://github.com/plastic-labs/honcho/issues) to see what's already being worked on or to find something to contribute to.

## Contribution Workflow

### 1. Fork and Clone

1. Fork the repository on GitHub

2. Clone your fork locally:

   ```bash theme={null}
   git clone https://github.com/YOUR_USERNAME/honcho.git
   cd honcho
   ```

3. Add the upstream repository as a remote:

   ```bash theme={null}
   git remote add upstream https://github.com/plastic-labs/honcho.git
   ```

### 2. Create a Branch

Create a new branch for your feature or bug fix:

```bash theme={null}

git checkout -b feature/your-feature-name

# or

git checkout -b fix/your-bug-fix-name

```

**Branch naming conventions:**

* `feature/description` - for new features

* `fix/description` - for bug fixes

* `docs/description` - for documentation updates

* `refactor/description` - for code refactoring

* `test/description` - for adding or updating tests

### 3. Make Your Changes

* Write clean, readable code that follows our coding standards (see below)

* Add tests for new functionality

* Update documentation as needed

* Make sure your changes don't break existing functionality

### 4. Commit Your Changes

We follow conventional commit standards. Format your commit messages as:

```

type(scope): description

[optional body]

[optional footer]

```

**Types:**

* `feat`: A new feature

* `fix`: A bug fix

* `docs`: Documentation only changes

* `style`: Changes that do not affect the meaning of the code

* `refactor`: A code change that neither fixes a bug nor adds a feature

* `test`: Adding missing tests or correcting existing tests

* `chore`: Changes to the build process or auxiliary tools

**Examples:**

```bash theme={null}

git commit -m "feat(api): add new dialectic endpoint for user insights"

git commit -m "fix(db): resolve connection pool timeout issue"

git commit -m "docs(readme): update installation instructions"

```
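If you want to check commit headers locally before pushing, the format above can be expressed as a regular expression. This is a sketch, not a tool shipped with the repository; it treats the scope as optional, which is an assumption, and `is_valid_header` is a hypothetical helper.

```python
import re

COMMIT_TYPES = ("feat", "fix", "docs", "style", "refactor", "test", "chore")

# type, optional (scope), a colon and a space, then a non-empty description
HEADER_RE = re.compile(
    r"^(?P<type>" + "|".join(COMMIT_TYPES) + r")"
    r"(?:\((?P<scope>[^()\s]+)\))?"
    r": (?P<description>\S.*)$"
)


def is_valid_header(header: str) -> bool:
    """Return True if the first line of a commit message matches the format."""
    return HEADER_RE.match(header) is not None
```

A check like this could run from a `commit-msg` Git hook, reading the first line of the message file it receives as its argument.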

### 5. Submit a Pull Request

1. Push your branch to your fork:

   ```bash theme={null}
   git push origin your-branch-name
   ```

2. Create a pull request on GitHub from your branch to the `main` branch

3. Fill out the pull request template with:

   * A clear description of what changes you've made
   * The motivation for the changes
   * Any relevant issue numbers (use "Closes #123" to auto-close issues)
   * Screenshots or examples if applicable

## Coding Standards

### Python Code Style

* Follow [PEP 8](https://www.python.org/dev/peps/pep-0008/) style guidelines

* Use [Black](https://black.readthedocs.io/) for code formatting (we may add this to CI in the future)

* Use type hints where possible

* Write docstrings for functions and classes using Google style docstrings

### Code Organization

* Keep functions focused and single-purpose

* Use meaningful variable and function names

* Add comments for complex logic

* Follow existing patterns in the codebase

### Testing

* Write unit tests for new functionality

* Ensure existing tests pass before submitting

* Use descriptive test names that explain what is being tested

* Mock external dependencies appropriately

### Documentation

* Update relevant documentation for new features

* Include examples in docstrings where helpful

* Keep README and other docs up to date with changes

## Review Process

1. **Automated checks** - Your PR will run through automated checks including tests and linting

2. **Project maintainer review** - A project maintainer will review your code for:

   * Code quality and adherence to standards
   * Functionality and correctness
   * Test coverage
   * Documentation completeness

3. **Discussion and iteration** - You may be asked to make changes or clarifications

4. **Approval and merge** - Once approved, your PR will be merged into `main`

## Types of Contributions

We welcome various types of contributions:

* **Bug fixes** - Help us squash bugs and improve stability

* **New features** - Add functionality that benefits the community

* **Documentation** - Improve or expand our documentation

* **Tests** - Increase test coverage and reliability

* **Performance improvements** - Help make Honcho faster and more efficient

* **Examples and tutorials** - Help other developers use Honcho

## Issue Reporting

When reporting bugs or requesting features:

1. Check if the issue already exists

2. Use the appropriate issue template

3. Provide clear reproduction steps for bugs

4. Include relevant environment information

5. Be specific about expected vs actual behavior

## Questions and Support

* **General questions** - Join our [Discord](http://discord.gg/plasticlabs)

* **Bug reports** - Use GitHub issues

* **Feature requests** - Use GitHub issues with the feature request template

* **Security issues** - Please email us privately rather than opening a public issue

## License

By contributing to Honcho, you agree that your contributions will be licensed under the same [AGPL-3.0 License](./license) that covers the project.

Thank you for helping make Honcho better! 🫡

# License

Source: https://docs.honcho.dev/v2/contributing/license

Honcho is licensed under the AGPL-3.0 License. The full text is copied below for convenience and is also available in the [GitHub Repository](https://github.com/plastic-labs/honcho).

```

GNU AFFERO GENERAL PUBLIC LICENSE

Version 3, 19 November 2007

Copyright (C) 2007 Free Software Foundation, Inc.

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU Affero General Public License is a free, copyleft license for

software and other kinds of works, specifically designed to ensure

cooperation with the community in the case of network server software.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

our General Public Licenses are intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

Developers that use our General Public Licenses protect your rights

with two steps: (1) assert copyright on the software, and (2) offer

you this License which gives you legal permission to copy, distribute

and/or modify the software.

A secondary benefit of defending all users' freedom is that

improvements made in alternate versions of the program, if they

receive widespread use, become available for other developers to

incorporate. Many developers of free software are heartened and

encouraged by the resulting cooperation. However, in the case of

software used on network servers, this result may fail to come about.

The GNU General Public License permits making a modified version and

letting the public access it on a server without ever releasing its

source code to the public.

The GNU Affero General Public License is designed specifically to

ensure that, in such cases, the modified source code becomes available

to the community. It requires the operator of a network server to

provide the source code of the modified version running there to the

users of that server. Therefore, public use of a modified version, on

a publicly accessible server, gives the public access to the source

code of the modified version.

An older license, called the Affero General Public License and

published by Affero, was designed to accomplish similar goals. This is

a different license, not a version of the Affero GPL, but Affero has

released a new version of the Affero GPL which permits relicensing under

this license.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU Affero General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,
procedures, authorization keys, or other information required to install
and execute modified versions of a covered work in that User Product from
a modified version of its Corresponding Source. The information must
suffice to ensure that the continued functioning of the modified object
code is in no case prevented or interfered with solely because
modification has been made.

If you convey an object code work under this section in, or with, or
specifically for use in, a User Product, and the conveying occurs as
part of a transaction in which the right of possession and use of the
User Product is transferred to the recipient in perpetuity or for a
fixed term (regardless of how the transaction is characterized), the
Corresponding Source conveyed under this section must be accompanied
by the Installation Information. But this requirement does not apply
if neither you nor any third party retains the ability to install
modified object code on the User Product (for example, the work has
been installed in ROM).

The requirement to provide Installation Information does not include a
requirement to continue to provide support service, warranty, or updates
for a work that has been modified or installed by the recipient, or for
the User Product in which it has been modified or installed. Access to a
network may be denied when the modification itself materially and
adversely affects the operation of the network or violates the rules and
protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,
in accord with this section must be in a format that is publicly
documented (and with an implementation available to the public in
source code form), and must require no special password or key for
unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this
License by making exceptions from one or more of its conditions.
Additional permissions that are applicable to the entire Program shall
be treated as though they were included in this License, to the extent
that they are valid under applicable law. If additional permissions
apply only to part of the Program, that part may be used separately
under those permissions, but the entire Program remains governed by
this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option
remove any additional permissions from that copy, or from any part of
it. (Additional permissions may be written to require their own
removal in certain cases when you modify the work.) You may place
additional permissions on material, added by you to a covered work,
for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you
add to a covered work, you may (if authorized by the copyright holders of
that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the
terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or
author attributions in that material or in the Appropriate Legal
Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or
requiring that modified versions of such material be marked in
reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or
authors of the material; or

e) Declining to grant rights under trademark law for use of some
trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that
material by anyone who conveys the material (or modified versions of
it) with contractual assumptions of liability to the recipient, for
any liability that these contractual assumptions directly impose on
those licensors and authors.

All other non-permissive additional terms are considered "further
restrictions" within the meaning of section 10. If the Program as you
received it, or any part of it, contains a notice stating that it is
governed by this License along with a term that is a further
restriction, you may remove that term. If a license document contains
a further restriction but permits relicensing or conveying under this
License, you may add to a covered work material governed by the terms
of that license document, provided that the further restriction does
not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you
must place, in the relevant source files, a statement of the
additional terms that apply to those files, or a notice indicating
where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the
form of a separately written license, or stated as exceptions;
the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly
provided under this License. Any attempt otherwise to propagate or
modify it is void, and will automatically terminate your rights under
this License (including any patent licenses granted under the third
paragraph of section 11).

However, if you cease all violation of this License, then your
license from a particular copyright holder is reinstated (a)
provisionally, unless and until the copyright holder explicitly and
finally terminates your license, and (b) permanently, if the copyright
holder fails to notify you of the violation by some reasonable means
prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is
reinstated permanently if the copyright holder notifies you of the
violation by some reasonable means, this is the first time you have
received notice of violation of this License (for any work) from that
copyright holder, and you cure the violation prior to 30 days after
your receipt of the notice.

Termination of your rights under this section does not terminate the
licenses of parties who have received copies or rights from you under
this License. If your rights have been terminated and not permanently
reinstated, you do not qualify to receive new licenses for the same
material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or
run a copy of the Program. Ancillary propagation of a covered work
occurring solely as a consequence of using peer-to-peer transmission
to receive a copy likewise does not require acceptance. However,
nothing other than this License grants you permission to propagate or
modify any covered work. These actions infringe copyright if you do
not accept this License. Therefore, by modifying or propagating a
covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically
receives a license from the original licensors, to run, modify and
propagate that work, subject to this License. You are not responsible
for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an
organization, or substantially all assets of one, or subdividing an
organization, or merging organizations. If propagation of a covered
work results from an entity transaction, each party to that
transaction who receives a copy of the work also receives whatever
licenses to the work the party's predecessor in interest had or could
give under the previous paragraph, plus a right to possession of the
Corresponding Source of the work from the predecessor in interest, if
the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that