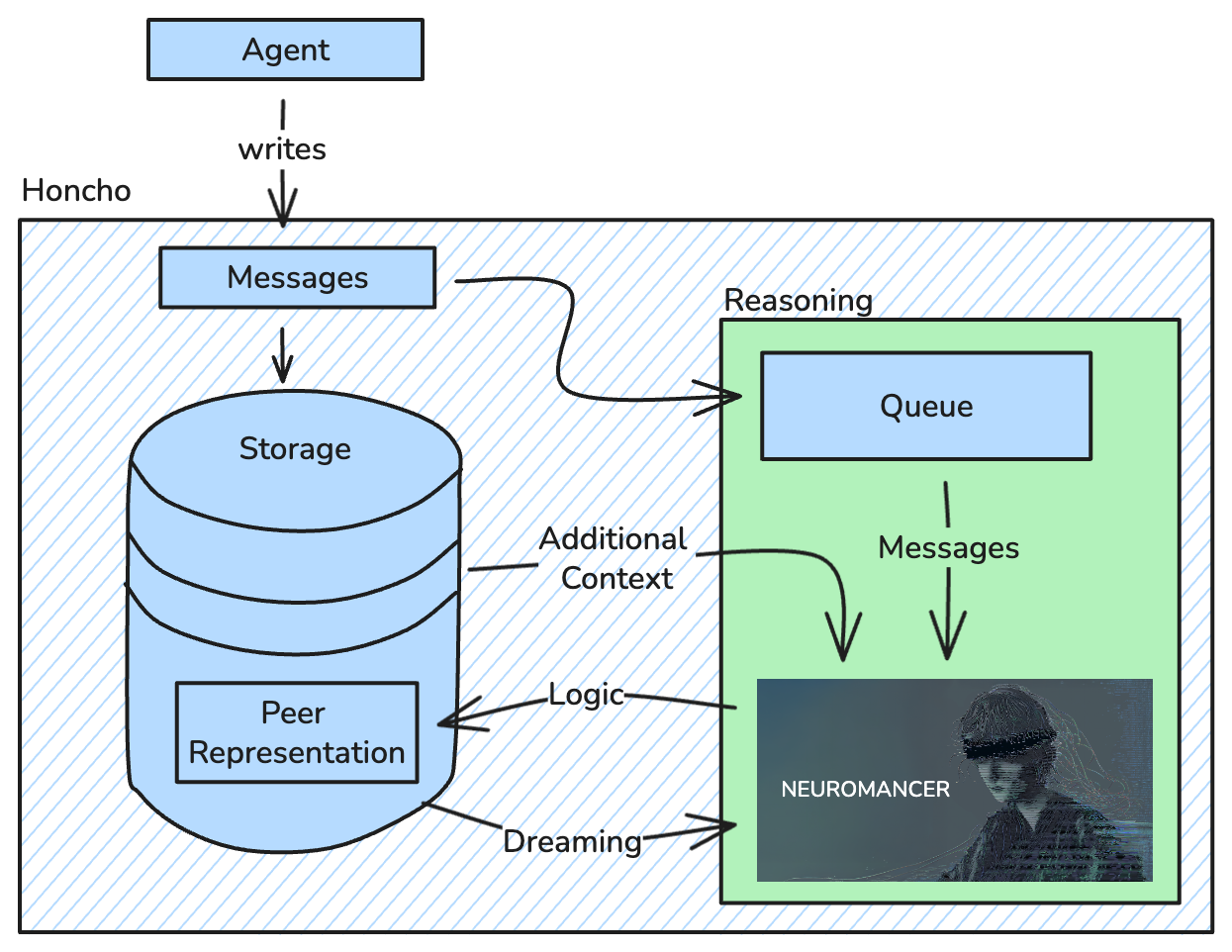

Honcho is a memory system that reasons. You can read more on the philosophy behind the approach here, but practically speaking, the system runs inference on data in the background to produce the highest quality context for simulating statefulness. This document explains why reasoning is necessary and how Honcho implements it.

If you’d like to experience this methodology first-hand, try out Honcho Chat—an interface to your personal memory. Read more here!

Why Reasoning?

Traditional RAG systems treat memory as static storage: they retrieve what was explicitly said when semantically similar queries appear. Other solutions decide for you what’s important to store, whether through structured facts in databases or predefined knowledge graphs. Honcho takes a different approach: we extract all latent information by reasoning about everything, so it’s there when you need it. Our job is to produce the most robust reasoning possible; it’s your job as a developer to decide what’s relevant for your use case.

We extract this latent information through formal logic. Formal logical reasoning is AI-native: LLMs perform the rigorous, compute-intensive thinking that humans struggle with, instantly and consistently. This unlocks insights that are only accessible by rigorously thinking about your data, generating new understanding that goes beyond simple recall.

Formal Logic Framework

Honcho’s memory system is powered by custom models trained to perform formal logical reasoning. The system extracts what was explicitly stated, draws logically certain conclusions from those statements, identifies patterns across multiple conclusions, and infers the simplest explanations for behavior.

Why formal logic specifically? LLMs are uniquely well-suited for this reasoning task because it’s well-represented in the pretraining data. LLMs can maintain consistent reasoning across thousands of conclusions without cognitive fatigue or belief resistance, which is extremely hard for humans to do reliably. The outputs are also composable, meaning logical conclusions can be stored, retrieved, and combined programmatically for dynamic context assembly.

Here’s an example of a data structure the reasoning models generate:

How It Works

When you write messages to Honcho, they’re stored immediately, without blocking, and enqueued for background processing. Reasoning asynchronously keeps writes fast while still providing rich reasoning capabilities. Session-based queues maintain chronological consistency, so reasoning tasks affecting the same peer representation are always processed in order. The reasoning outputs (conclusions, summaries, peer cards) are stored as part of peer representations and indexed in vector collections for retrieval.
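
To make the write path above concrete, here is a minimal sketch of "store immediately, reason later, in order". It is a toy in-memory model, not Honcho's implementation: the `Message` and `IngestPipeline` names, their fields, and the `drain` method are all hypothetical, and a real system would use durable storage and background workers.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Message:
    # Hypothetical shape; field names are assumptions for illustration.
    session_id: str
    peer_id: str
    content: str


class IngestPipeline:
    """Toy model: writes return right away; reasoning runs later, in order."""

    def __init__(self):
        self.stored = []                  # stands in for durable message storage
        self.queues = defaultdict(deque)  # one FIFO queue per session
        self.processed = []               # order in which reasoning saw messages

    def write(self, message):
        # Store first, then enqueue. No reasoning happens on the write path.
        self.stored.append(message)
        self.queues[message.session_id].append(message)

    def drain(self, session_id):
        # Stand-in for a background worker: pop from the left so messages
        # are handled in the order they were written within the session.
        queue = self.queues[session_id]
        while queue:
            self.processed.append(queue.popleft())


pipeline = IngestPipeline()
pipeline.write(Message("s1", "alice", "I moved to Lisbon last year."))
pipeline.write(Message("s2", "bob", "Can you recommend a book?"))
pipeline.write(Message("s1", "alice", "I still work for a Berlin company."))

# Only session s1 is processed here; s2 stays queued but is already stored.
pipeline.drain("s1")
```

Keeping one queue per session is what lets unrelated sessions proceed independently while each session's messages are still reasoned over chronologically.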

Token Batching

Rather than running inference on every individual message, Honcho accumulates messages in the queue and processes them as a batch once the total token count of pending messages for a given peer representation crosses a threshold, roughly 1,000 tokens at the current batch size. This keeps ingestion costs down, since Honcho charges based on reasoning passes, and ensures each pass has a meaningful amount of context to work with. At ~1,000 tokens the batch comfortably fits in the context window of any modern LLM, so no content is lost. If a user sends several short messages in a row (e.g., “yes”, “ok”, “sounds good”), those messages sit in the queue until enough content has accumulated. Once the threshold is met, the full batch is processed together in a single reasoning call.

This batching only applies to representation tasks (conclusion extraction). Summary and dream tasks have their own scheduling logic and are not subject to the token threshold.
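
The threshold behavior described above for representation tasks can be sketched in a few lines. This is an illustration under stated assumptions, not Honcho's code: `PendingBatch`, `estimate_tokens`, and `BATCH_TOKEN_THRESHOLD` are hypothetical names, the threshold value is taken from the "roughly 1,000 tokens" figure, and the four-characters-per-token estimate is a crude stand-in for a real tokenizer.

```python
from dataclasses import dataclass, field

BATCH_TOKEN_THRESHOLD = 1000  # assumed from the "roughly 1,000 tokens" figure


def estimate_tokens(text):
    # Crude stand-in for a real tokenizer: about 4 characters per token,
    # with a minimum of 1 so that very short messages still count.
    return max(1, len(text) // 4)


@dataclass
class PendingBatch:
    """Pending messages for one peer representation (hypothetical model)."""

    messages: list = field(default_factory=list)
    token_count: int = 0

    def add(self, text):
        # Queue the message; return the whole batch once the running token
        # count reaches the threshold, otherwise return None and keep waiting.
        self.messages.append(text)
        self.token_count += estimate_tokens(text)
        if self.token_count < BATCH_TOKEN_THRESHOLD:
            return None
        batch = self.messages
        self.messages, self.token_count = [], 0
        return batch


pending = PendingBatch()

# Short replies accumulate without triggering a reasoning pass.
short_results = [pending.add(m) for m in ("yes", "ok", "sounds good")]

# A long message pushes the pending total over the threshold, so all four
# queued messages are released together for a single reasoning call.
long_message = "word " * 1000
flushed = pending.add(long_message)
```

In this sketch the short replies are never dropped: they wait in the pending list and are delivered in the same batch as the message that crosses the threshold.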

Balances & Design Choices

Off-the-shelf LLMs can perform formal logical reasoning, but they aren’t optimized for it. Honcho uses custom models trained specifically for logical rigor (following formal reasoning rules rather than plausible-sounding text), structured output (consistent JSON schema with premises and conclusions), and efficiency (smaller, faster models tuned for this specific task). This allows Honcho to reason more reliably and at lower cost than general-purpose frontier LLMs.

The approach balances quality with practical constraints. Custom models are smaller and cheaper to run, scaffolded conclusions are more token-efficient than raw conversation history, and we batch where appropriate to optimize update frequency.

Honcho’s reasoning capabilities are actively being improved. Current areas of development include enhanced inductive and abductive reasoning, multi-hop and temporal reasoning, and expanded file types and modalities. The system is designed to be extensible: new reasoning capabilities can be added without breaking existing functionality.

Next Steps

Without exhaustive reasoning, you’re stuck with surface-level retrieval or someone else’s opinion on what matters. You can’t effectively simulate statefulness if you’re not reasoning about everything in the present: coherence plummets, trust falls, and users churn. Don’t leave key information on the table. Use Honcho to give your agents the context they need to reconstruct the past as comprehensively as possible and maintain coherence for your use case.

Get an API Key

Sign up for the Honcho platform and start building

Quickstart

Get started with your first integration

Architecture

See how reasoning fits into Honcho’s overall architecture

Peer Representations

Learn how reasoning produces peer representations